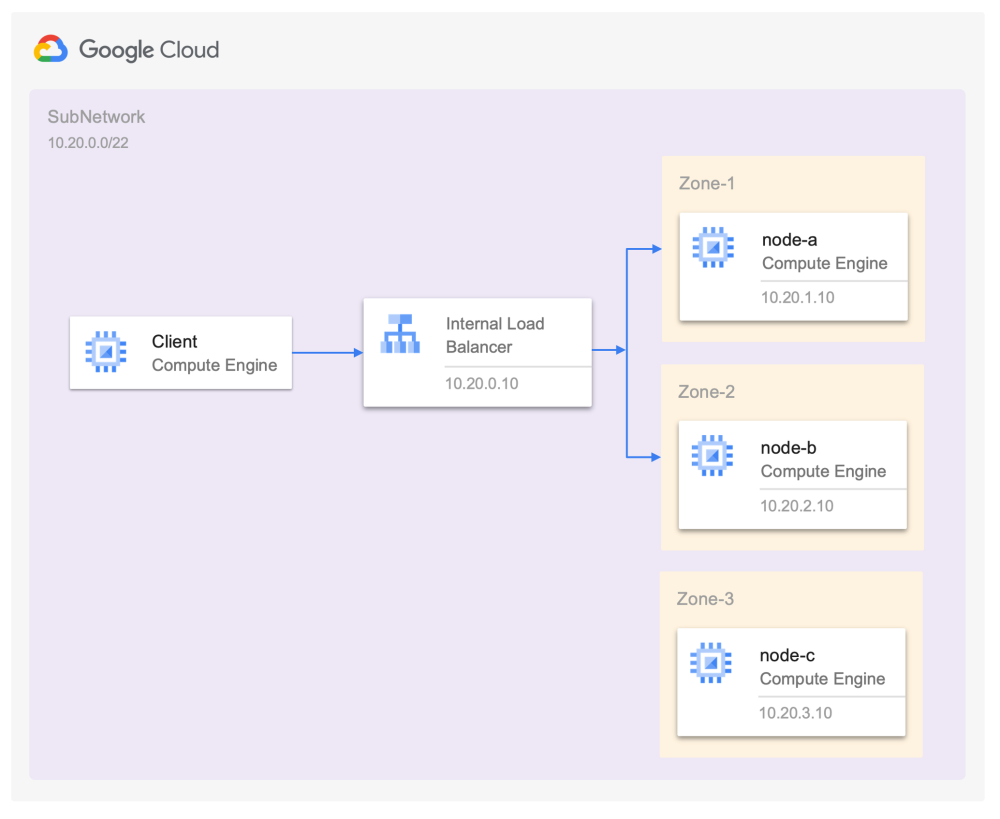

This section describes how to switch client traffic between nodes using a GCP Internal Load Balancer (ILB) that routes traffic to the active node. Clients connect to the frontend IP address provided by the Internal Load Balancer. The Internal Load Balancer periodically checks the health of each VM in the backend pool using a user-defined health check probe. It then routes client requests only to the node that passes the check, which is the active node.

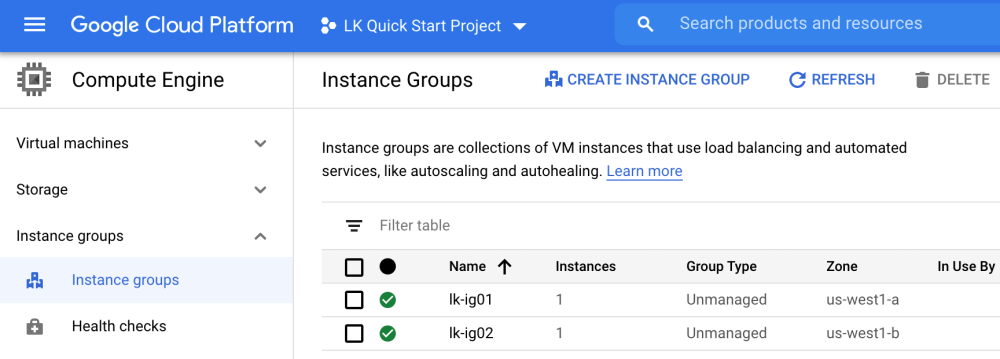

Create Instance Groups

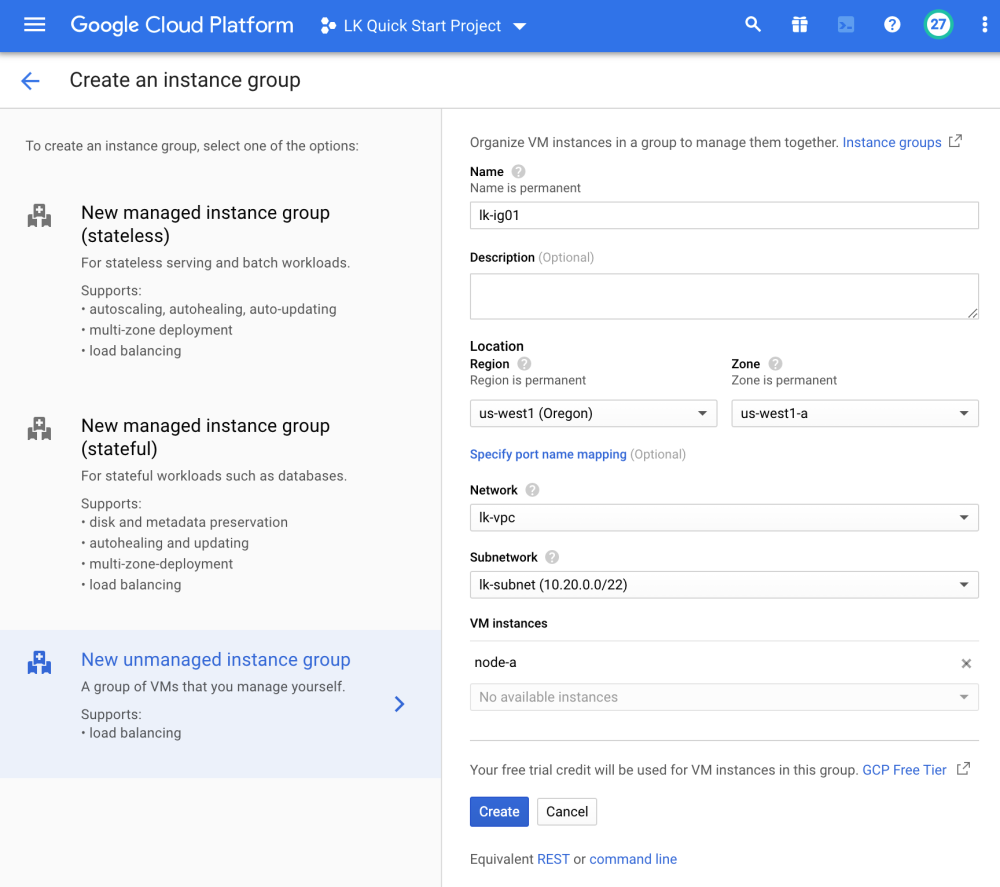

To use an Internal Load Balancer, first create an instance group for each of the nodes. Create the instance groups with the following parameters:

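| Name | Region | Zone | Network | Subnetwork | VM instance |
|---|---|---|---|---|---|
| lk-ig01 | us-west1 | us-west1-a | lk-vpc | lk-subnet | node-a |
| lk-ig02 | us-west1 | us-west1-b | lk-vpc | lk-subnet | node-b |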

- Select “Compute Engine” > “Instance groups” from the navigation menu.

- Click “CREATE INSTANCE GROUP”, select “New unmanaged instance group”, then enter the parameters listed in the table for lk-ig01.

- Create lk-ig02 following the same steps.

- The two instance groups are now created.
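If you prefer the gcloud CLI to the console, the steps above can be sketched as follows. This is a minimal sketch, assuming gcloud is installed and authenticated for the project and that node-a and node-b already exist in us-west1-a and us-west1-b. The `run` helper, `DRY_RUN` variable and `create_ig` function are hypothetical conveniences added for this example; by default the commands are only printed so you can review them. Unlike the console, `gcloud compute instance-groups unmanaged create` takes no network or subnetwork flag; the network is determined by the VMs you add.

```bash
#!/bin/bash
# Sketch: create lk-ig01/lk-ig02 from the table above with the gcloud CLI.
# Assumes gcloud is installed and authenticated, and that node-a and node-b
# already exist in us-west1-a and us-west1-b. DRY_RUN and run() are
# hypothetical helpers: by default commands are printed, not executed.
set -euo pipefail

DRY_RUN="${DRY_RUN:-1}"   # set DRY_RUN=0 to actually run the commands
REGION="us-west1"

# Print the command in dry-run mode; otherwise execute it.
run() {
  if [[ "$DRY_RUN" == "1" ]]; then
    echo "$*"
  else
    "$@"
  fi
}

# create_ig NAME ZONE_LETTER VM: create an unmanaged instance group in
# ${REGION}-ZONE_LETTER and add a single VM to it.
create_ig() {
  local name="$1" zone="${REGION}-$2" vm="$3"
  run gcloud compute instance-groups unmanaged create "$name" --zone="$zone"
  run gcloud compute instance-groups unmanaged add-instances "$name" \
    --zone="$zone" --instances="$vm"
}

create_ig lk-ig01 a node-a
create_ig lk-ig02 b node-b
```

Run it once as-is to review the printed commands, then again with `DRY_RUN=0` to create the groups.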

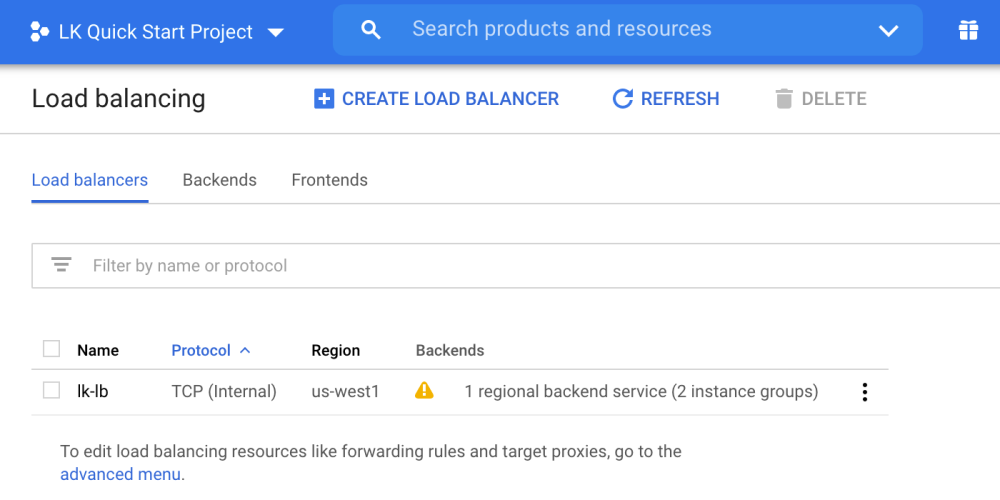

Create an Internal Load Balancer

An internal load balancer distributes traffic to the active node and can be created using the following steps. Before you begin, start the application on node-a so that you can confirm the load balancer works before placing the application under LifeKeeper protection.

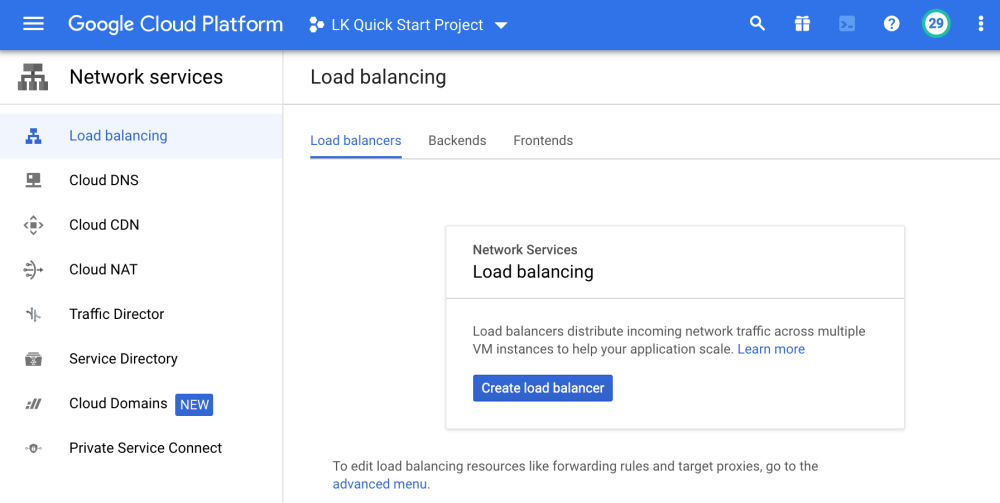

- On the navigation menu, select “Network services” > “Load balancing”.

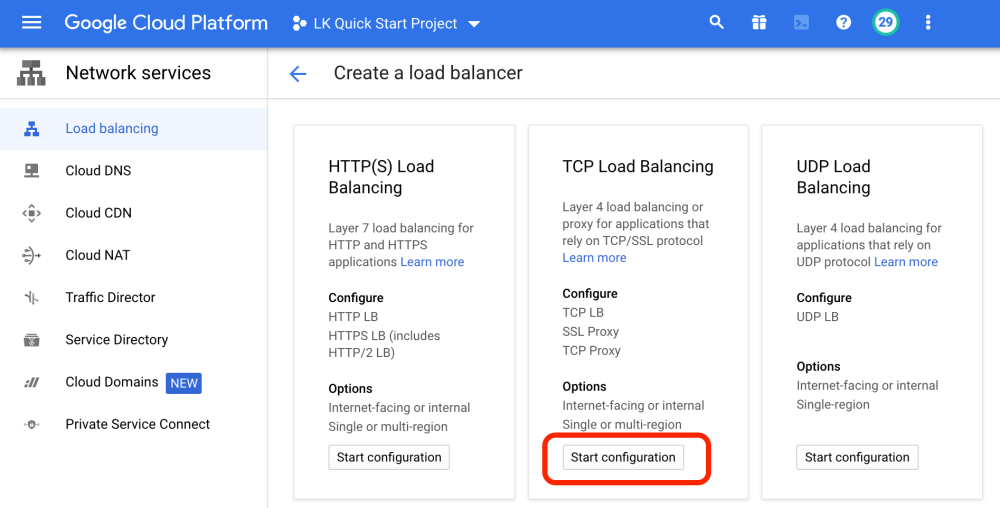

- Click “Start Configuration” under “TCP Load Balancing”.

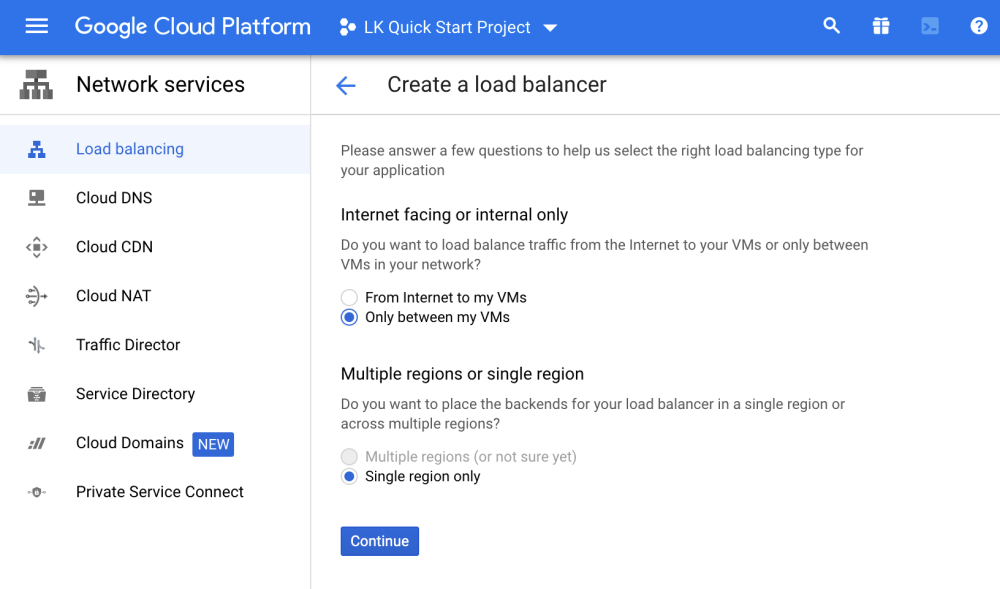

- Select “Only between my VMs” (that is, an internal load balancer) and “Single region only”, then click “Continue”.

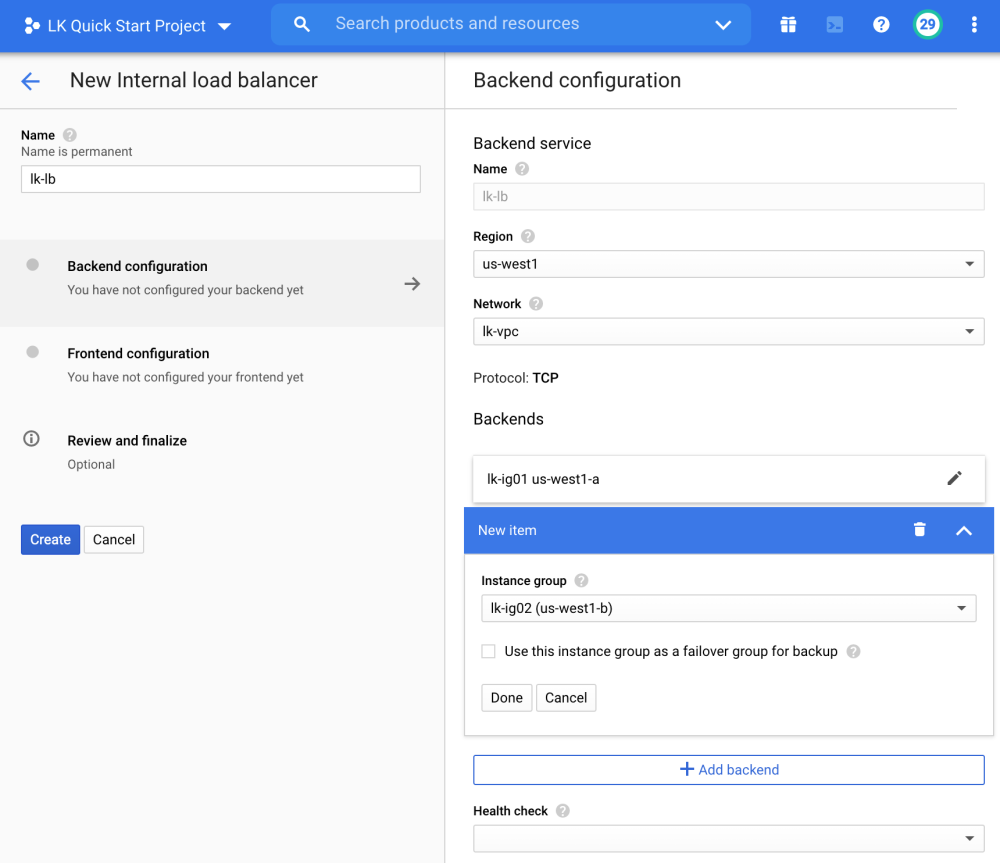

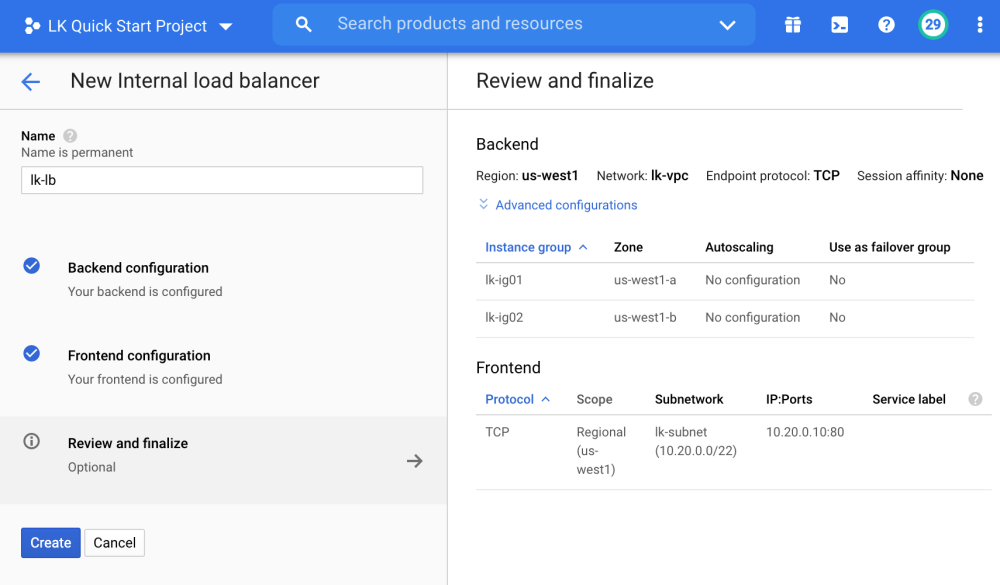

- Enter lk-lb as the name, then configure the backend: select the region (us-west1) and the network (lk-vpc), then select the two instance groups (lk-ig01 and lk-ig02) from the dropdown menu to add them to the backend pool.

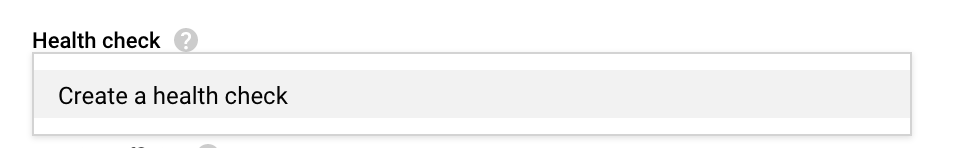

- In the “Health check” field at the bottom of the Backend configuration page, select “Create a health check”.

- On the health check configuration screen, enter lk-health-check as the name and set the port (make sure it matches the port of the service you are protecting). Review the other parameters, then click “Save and continue”.

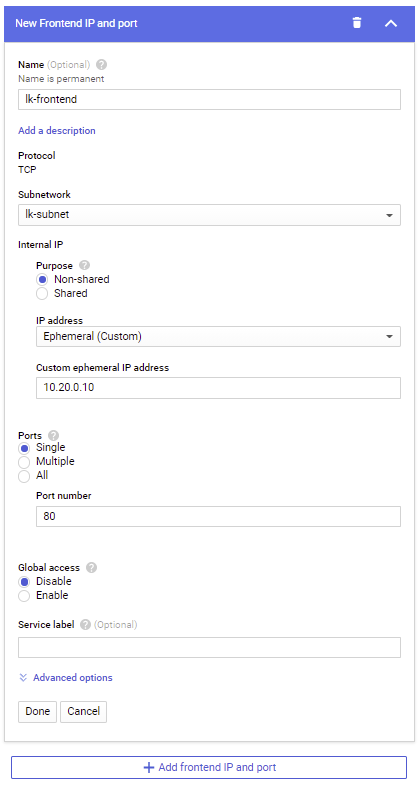

- Move to the “Frontend configuration” tab. Provide the name and subnetwork for the frontend. In this example we will use the name lk-frontend and select the lk-subnet subnetwork. Under “IP address”, choose “Ephemeral (Custom)” and specify the custom ephemeral IP address to be used by the load balancer frontend. In this example we will use IP address 10.20.0.10. Under “Ports”, select either “Single”, “Multiple”, or “All” as appropriate, based on the application that traffic is being routed to, and enter any required ports. In this example we will forward traffic on TCP port 80.

- Move on to the “Review and Finalize” tab and click “Create”.

- Once the load balancer is created, its status is displayed. Because the application (such as httpd) runs on only one of the nodes at a time, the backend on the other node fails its health check and may be shown with an unhealthy status icon. This is expected.

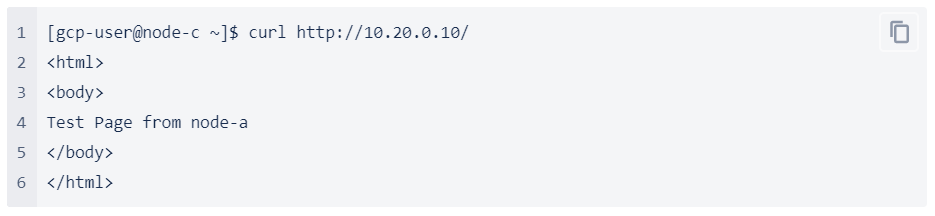

- The Internal Load Balancer is now configured. Once the application to be protected is installed and running on node-a, clients can connect to it through the frontend IP (10.20.0.10) of the ILB, and you can check which node is currently serving HTTP traffic through the ILB.
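The console steps above can also be sketched with the gcloud CLI, followed by a check of which node currently answers HTTP requests through the frontend IP. Assumptions: gcloud is configured for the project and the instance groups already exist; the health check is created here as a global TCP health check on port 80, which may differ from what the console wizard creates; `run`, `DRY_RUN`, `create_ilb` and `check_target` are hypothetical names used only for this example. The `curl` check identifies the serving node only if the application's response contains something node-specific, such as a test page that includes the host name.

```bash
#!/bin/bash
# Sketch: CLI equivalent of the console steps for lk-lb, plus a curl check.
# Assumes gcloud is configured for the project and the instance groups exist.
# DRY_RUN, run(), create_ilb() and check_target() are hypothetical helpers.
set -euo pipefail

DRY_RUN="${DRY_RUN:-1}"   # set DRY_RUN=0 to actually run the commands
REGION="us-west1"
FRONTEND_IP="10.20.0.10"
PORT=80

# Print the command in dry-run mode; otherwise execute it.
run() {
  if [[ "$DRY_RUN" == "1" ]]; then
    echo "$*"
  else
    "$@"
  fi
}

create_ilb() {
  # TCP health check on the application port (global health check assumed).
  run gcloud compute health-checks create tcp lk-health-check --port="$PORT"

  # Regional internal backend service ("Backend configuration" in the console).
  run gcloud compute backend-services create lk-lb \
    --load-balancing-scheme=internal --protocol=tcp \
    --region="$REGION" --health-checks=lk-health-check

  # Add both instance groups (name:zone-letter) to the backend pool.
  local entry
  for entry in lk-ig01:a lk-ig02:b; do
    run gcloud compute backend-services add-backend lk-lb \
      --region="$REGION" --instance-group="${entry%%:*}" \
      --instance-group-zone="${REGION}-${entry##*:}"
  done

  # Frontend: internal forwarding rule with the custom IP and port.
  run gcloud compute forwarding-rules create lk-frontend \
    --region="$REGION" --load-balancing-scheme=internal \
    --network=lk-vpc --subnet=lk-subnet --address="$FRONTEND_IP" \
    --ip-protocol=TCP --ports="$PORT" \
    --backend-service=lk-lb --backend-service-region="$REGION"
}

# Send one HTTP request through the frontend IP. Run this from a client VM
# that is not itself a backend of lk-lb.
check_target() {
  run curl --max-time 5 -s "http://${FRONTEND_IP}/"
}

create_ilb
check_target
```

Printing the commands first makes it easy to compare them with the values chosen in the console wizard before anything is created.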

Enabling Load Balancer Traffic from Backend Servers

In certain configurations, two applications which are hosted on different backend servers of an internal load balancer may need to communicate through the frontend IP of the load balancer itself. This happens, for example, when using internal load balancers to manage floating IP failover for ASCS and ERS instances in an SAP AS ABAP deployment.

Because of how internal load balancers are implemented in Google Cloud, traffic sent from a backend server of a load balancer to that load balancer's frontend IP is always routed back to the backend server it originated from, regardless of whether the load balancer's health checks consider that server healthy. See the “Traffic is sent to unexpected backend VMs” section of “Troubleshooting Internal TCP/UDP Load Balancing” for more details.

The following steps describe a workaround for this behavior. These steps must be performed on every VM that will be added as a backend target of the load balancer.

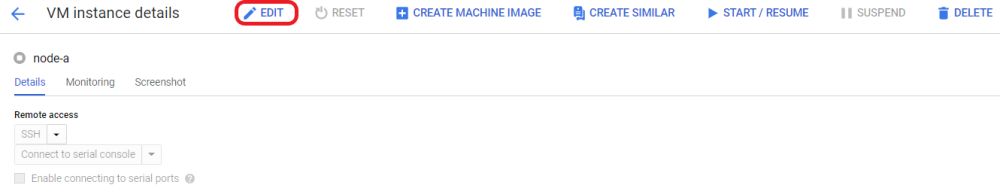

- In the Google Cloud Console, click the VM instance and select Edit from the top menu.

- In the Custom metadata section, add a new key-value pair with key “startup-script”. Add the contents of the following script to the value field:

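```bash
#!/bin/bash
# Look up the MAC address of the primary interface (nic0) from the metadata server.
nic0_mac="$(curl -s -H "Metadata-Flavor:Google" \
  http://169.254.169.254/computeMetadata/v1/instance/network-interfaces/0/mac)"

# Find the OS interface name (for example eth0 or ens4) that has that MAC address.
nic0_name=""
for nic_path in /sys/class/net/*; do
  nic="$(basename "$nic_path")"
  if [ "$(cat "$nic_path/address")" == "$nic0_mac" ]; then
    nic0_name="$nic"
    break
  fi
done

# Quote the variable: an unquoted empty value makes "[ -n ]" always true.
if [ -n "$nic0_name" ]; then
  # Let nic0 accept packets with a locally configured source address,
  # and persist the setting in /etc/sysctl.conf.
  if ! grep -q "net.ipv4.conf.${nic0_name}.accept_local=1" /etc/sysctl.conf; then
    echo "net.ipv4.conf.${nic0_name}.accept_local=1" >> /etc/sysctl.conf
    sysctl -p
  fi

  # Consult the local routing table only for packets arriving on nic0, so that
  # locally generated traffic to the frontend IP leaves the VM for the load balancer.
  ip rule add pref 0 from all iif "${nic0_name}" lookup local
  ip rule del from all lookup local

  # Loopback traffic no longer reaches the local table, so add its routes to main.
  ip route add local 127.0.0.0/8 dev lo proto kernel \
    scope host src 127.0.0.1 table main
  ip route add local 127.0.0.1 dev lo proto kernel \
    scope host src 127.0.0.1 table main
  ip route add broadcast 127.0.0.0 dev lo proto kernel \
    scope link src 127.0.0.1 table main
  ip route add broadcast 127.255.255.255 dev lo proto kernel \
    scope link src 127.0.0.1 table main
fi
```

- Click Save at the bottom of the page to save the changes.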

- Once the changes have been saved successfully, reboot the VM to allow the changes to take effect.
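Applying the startup script from the CLI and confirming that it took effect can be sketched as follows. This assumes the script above has been saved locally as lk-startup.sh (a hypothetical filename) and that the gcloud CLI is available. `apply_startup_script` sets the same `startup-script` metadata key as the console steps; `has_local_rule` is a hypothetical helper that reads `ip rule show` output and succeeds only when the pref-0 rule for the given interface exists and the default pref-0 `from all lookup local` rule is gone. `run` and `DRY_RUN` are hypothetical as well; by default commands are only printed.

```bash
#!/bin/bash
# Sketch: push the startup script to each backend VM and check the result.
# Assumes the script above is saved as lk-startup.sh (hypothetical name).
# DRY_RUN, run(), apply_startup_script() and has_local_rule() are hypothetical.
set -euo pipefail

DRY_RUN="${DRY_RUN:-1}"   # set DRY_RUN=0 to actually run the commands

# Print the command in dry-run mode; otherwise execute it.
run() {
  if [[ "$DRY_RUN" == "1" ]]; then
    echo "$*"
  else
    "$@"
  fi
}

# apply_startup_script VM ZONE FILE: set the "startup-script" metadata key,
# as in the console steps. Reboot the VM afterwards so the script runs.
apply_startup_script() {
  run gcloud compute instances add-metadata "$1" --zone="$2" \
    --metadata-from-file=startup-script="$3"
}

# has_local_rule NIC: read `ip rule show` output on stdin; succeed only if the
# pref-0 "iif NIC lookup local" rule exists and the default pref-0
# "from all lookup local" rule has been removed.
has_local_rule() {
  local nic="$1" rules
  rules="$(cat)"
  grep -Eq "^0:[[:space:]]+from all iif ${nic} lookup local" <<<"$rules" || return 1
  ! grep -Eq "^0:[[:space:]]+from all lookup local" <<<"$rules"
}

apply_startup_script node-a us-west1-a lk-startup.sh
apply_startup_script node-b us-west1-b lk-startup.sh
```

After the reboot, on each VM (substituting the real nic0 name; eth0 here is an assumption), `ip rule show | has_local_rule eth0 && echo "workaround active"` should report success, and `sysctl -n net.ipv4.conf.eth0.accept_local` should print 1.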

See the “Test GenLB Resource Switchover and Failover” section of “Responding to Load Balancer Health Checks” for details on how to test this behavior.
