Fusion-io Best Practices for Maximizing DataKeeper Performance

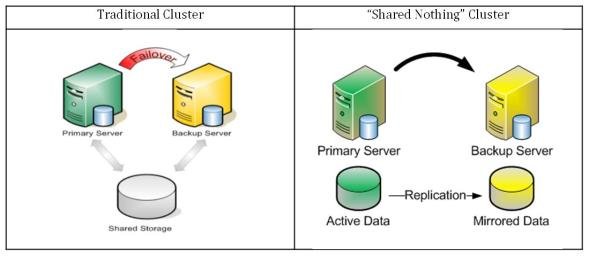

SPS for Linux includes integrated, block-level data replication functionality that makes it easy to set up a cluster when no shared storage is involved. Using Fusion-io, SPS for Linux allows you to form “shared nothing” clusters for failover protection.

When leveraging data replication as part of a cluster configuration, it is critical that you have enough bandwidth so that data can be replicated across the network just as fast as it is being written to disk. The following best practices will allow you to get the most out of your “shared nothing” SPS cluster configuration when high-speed storage is involved:

Network

- Use a 10 Gbps NIC: Flash-based storage devices from Fusion-io (or similar products from OCZ, LSI, etc.) are capable of writing data at hundreds of MB/sec (750 MB/sec or more). A 1 Gbps NIC can push a theoretical maximum of only about 125 MB/sec, so anyone taking advantage of an ioDrive’s potential can easily write data much faster than a 1 Gbps network connection can replicate it. To ensure that you have sufficient bandwidth between servers for real-time data replication, always use a 10 Gbps NIC to carry replication traffic.

- Enable Jumbo Frames: If your network cards and switches support them, enabling jumbo frames can greatly increase your network’s throughput while reducing CPU usage. To enable jumbo frames, perform the following configuration (the example is for a Red Hat/CentOS/OEL Linux distribution):

º Run the following command:

ip link set <interface_name> mtu 9000

º To ensure the change persists across reboots, add “MTU=9000” to the following file:

/etc/sysconfig/network-scripts/ifcfg-<interface_name>

º To verify end-to-end jumbo frame operation, run the following command:

ping -s 8900 -M do <IP-of-other-server>

- Change the NIC’s transmit queue length:

º Run the following command:

ip link set <interface_name> txqueuelen 10000

º To preserve the setting across reboots, add the command above to /etc/rc.local.

- Change the NIC’s netdev_max_backlog:

º Set the following in /etc/sysctl.conf:

net.core.netdev_max_backlog = 100000
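The network settings above can be checked after they are applied. The following is a minimal sketch, not part of the product: the function names and the `SYSFS_ROOT`/`PROC_ROOT` overrides are hypothetical helpers introduced for illustration, and the 750 MB/sec threshold is simply the example write speed quoted earlier. It reads the interface's MTU, transmit queue length, and link speed from sysfs, and the backlog value from `/proc`.

```shell
#!/bin/sh
# Sketch: report whether a NIC matches the settings recommended above.
# SYSFS_ROOT and PROC_ROOT are hypothetical overrides so the checks can be
# pointed at a scratch directory; they default to the real kernel trees.
SYSFS_ROOT="${SYSFS_ROOT:-/sys}"
PROC_ROOT="${PROC_ROOT:-/proc}"

# Theoretical ceiling in MB/sec for a link speed given in Mbps (8 bits/byte).
link_mb_per_sec() {
    echo $(( $1 / 8 ))
}

# Largest ICMP payload that fits one IPv4 frame of the given MTU
# (20-byte IPv4 header plus 8-byte ICMP header).
max_ping_payload() {
    echo $(( $1 - 28 ))
}

# Print "ok" or "low" for one setting: name, actual value, minimum wanted.
report() {
    if [ "$2" -ge "$3" ]; then
        echo "$1=$2 ok"
    else
        echo "$1=$2 low (want >= $3)"
    fi
}

# Check MTU, transmit queue length, link speed, and backlog for one interface.
check_nic() {
    dev="$SYSFS_ROOT/class/net/$1"
    # The speed file can be unreadable while the link is down; treat as 0.
    speed=$(cat "$dev/speed" 2>/dev/null || echo 0)
    report mtu "$(cat "$dev/mtu")" 9000
    report txqueuelen "$(cat "$dev/tx_queue_len")" 10000
    report link_MB_per_sec "$(link_mb_per_sec "$speed")" 750
    report netdev_max_backlog \
        "$(cat "$PROC_ROOT/sys/net/core/netdev_max_backlog")" 100000
}
```

After sourcing the file, `check_nic <interface_name>` prints one line per setting. `max_ping_payload 9000` shows why a payload of 8900 bytes in the ping test fits in a single jumbo frame, while anything above the computed value would need fragmentation and fail with `-M do`.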

TCP/IP Tuning

- The following TCP/IP tuning has been shown to increase replication performance:

º Edit /etc/sysctl.conf and add the following parameters (Note: These are examples and may vary according to your environment):

net.core.rmem_default = 16777216

net.core.wmem_default = 16777216

net.core.rmem_max = 16777216

net.core.wmem_max = 16777216

net.ipv4.tcp_rmem = 4096 87380 16777216

net.ipv4.tcp_wmem = 4096 65536 16777216

net.ipv4.tcp_timestamps = 0

net.ipv4.tcp_sack = 0

net.core.optmem_max = 16777216

net.ipv4.tcp_congestion_control = htcp
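Entries in /etc/sysctl.conf are normally loaded with `sysctl -p` or at boot. As a hedged sketch of how the running values could then be compared with the examples above, the helpers below map each key to its file under `/proc/sys` and compare the contents. The function names and the `PROC_ROOT` override are hypothetical, introduced only for this illustration.

```shell
#!/bin/sh
# Sketch: compare running kernel values against the example settings above.
# PROC_ROOT is a hypothetical override for dry runs; it defaults to /proc.
PROC_ROOT="${PROC_ROOT:-/proc}"

# Map a sysctl key such as net.core.rmem_max to its file under /proc/sys.
sysctl_path() {
    echo "$PROC_ROOT/sys/$(echo "$1" | tr '.' '/')"
}

# Collapse tabs and repeated spaces so multi-value entries compare cleanly.
normalize() {
    echo "$1" | tr -s '[:space:]' ' ' | sed 's/^ //; s/ $//'
}

# Print "match" or "differs" for one key and its expected value.
check_sysctl() {
    actual=$(normalize "$(cat "$(sysctl_path "$1")" 2>/dev/null)")
    expected=$(normalize "$2")
    if [ "$actual" = "$expected" ]; then
        echo "$1 match"
    else
        echo "$1 differs (running: '$actual', expected: '$expected')"
    fi
}
```

For example, `check_sysctl net.ipv4.tcp_rmem "4096 87380 16777216"` reports whether the running receive buffer sizes match the example. If `net.ipv4.tcp_congestion_control` does not accept `htcp`, the H-TCP module may not be loaded on your kernel; `sysctl net.ipv4.tcp_available_congestion_control` lists the algorithms currently available.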

Configuration Recommendations

- Allocate a small (~100 MB) disk partition on the Fusion-io drive to hold the bitmap file. Create a filesystem on this partition and mount it, for example, at /bitmap:

# mount | grep /bitmap

/dev/fioa1 on /bitmap type ext3 (rw)

- Prior to creating your mirror, adjust the following parameter in /etc/default/LifeKeeper:

LKDR_CHUNK_SIZE=4096 (Default value is 256)

- Create your mirrors and configure the cluster as you normally would.

- When creating the mirror, make sure the bitmap file is placed on the partition set up above (for example, under /bitmap).

- Set up for faster resynchronization. Select “Set Resync Speed Limits” from the right-click menu of the DataKeeper resource and enter the following value in the wizard. Note: Setting the resync speeds via the UI causes the changes to take effect immediately. If they are added to the defaults file instead, the resource may need to be taken out of service and brought back in service.

Minimum Resync Speed Limit: 200000

- The Minimum Resync Speed Limit is the resync speed that is allowed while other I/O is in progress. As a rule of thumb, set it to less than half of the drive’s maximum write throughput so that resynchronization does not disrupt normal I/O.

Maximum Resync Speed Limit: 1500000

- The Maximum Resync Speed Limit is the maximum bandwidth used during resync. Set it high enough to allow resync to run at the maximum available speed.
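The "less than half of the drive's write throughput" rule for the minimum limit is simple arithmetic. The sketch below works it through under a loudly stated assumption: that the resync limits are expressed in KB/sec with 1 MB = 1000 KB. The source does not state the unit, so confirm it in your DataKeeper documentation before relying on these numbers. The function names are hypothetical.

```shell
#!/bin/sh
# Sketch: derive an upper bound for the Minimum Resync Speed Limit from a
# drive's peak write throughput, following the "under half" rule of thumb.
# ASSUMPTION: limits are in KB/sec and 1 MB = 1000 KB; verify the unit
# for your installation before using these values.

# Half of the drive's write throughput (given in MB/sec), in KB/sec.
half_write_throughput_kb() {
    echo $(( $1 * 1000 / 2 ))
}

# Succeeds when a proposed minimum limit stays under that bound.
# Arguments: proposed limit (KB/sec), drive write throughput (MB/sec).
min_limit_is_safe() {
    [ "$1" -lt "$(half_write_throughput_kb "$2")" ]
}
```

Under that assumption, a drive that writes 750 MB/sec gives a bound of 375000, so the example minimum of 200000 stays below it: `min_limit_is_safe 200000 750` succeeds.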
