In this section we will set up a highly-available NFS mount target using the NetApp Cloud Volumes Service for Google Cloud, which is a cloud-native third-party service that integrates with Google Cloud to provide zonal high-availability for shared file systems. Complete the following steps to create a Cloud Volume, mount it on each cluster node, and prepare the shared file system for installation of the SAP instances.

- Follow the steps provided in the Quickstart for Cloud Volumes Service to purchase and enable the Cloud Volumes Service in your Google Cloud project.

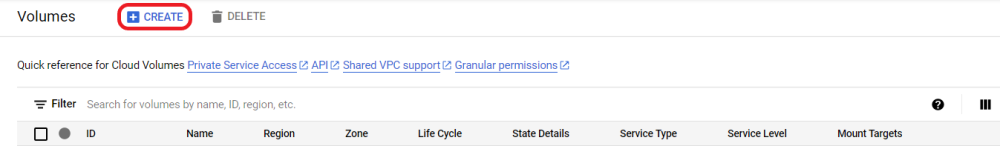

- Once the Cloud Volumes Service has been successfully enabled, navigate to the Cloud Volumes → Volumes page and click Create at the top to create a new Cloud Volume.

- Enter the following parameters and click Save at the bottom of the page to create the Cloud Volume. The icon indicates that the default option is chosen.

| Parameter | Value |
| --- | --- |
| Volume Name | cv-sapnfs |
| Service Type | CVS |
| Region | <Deployment region> (e.g., us-east1) |
| Zone | <Zone> (e.g., us-east1-b) |
| Volume Path | cv-sapnfs |
| Service Level | Standard-SW |
| Volume Details | 1024 GiB |
| Protocol Type | NFSv3 |
| Make snapshot directory visible | Unchecked |
| Shared VPC configuration | Unchecked |
| Use Custom Address Range | Unchecked |
| Export Rule 1 | Allow ‘Read & Write’ on 10.20.0.0/22 |
| Allow automatic snapshots | Unchecked |

Note: Export Rule 1 exposes the NFS export to the entire lk-subnet subnetwork for simplicity. The export rules can be made more fine-grained so that only the cluster nodes which mount the NFS share are allowed access.
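When narrowing the export rule, it can help to confirm which addresses a given CIDR block actually covers. The following is a minimal sketch of such a check, not part of the documented procedure. The function names `ip_to_int` and `ip_in_cidr` and the sample addresses are hypothetical, and the sketch assumes IPv4 octets are written without leading zeros.

```shell
#!/usr/bin/env bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<EOF
$1
EOF
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Return 0 if address $1 lies inside CIDR block $2 (e.g. 10.20.0.0/22).
ip_in_cidr() {
  local ip net bits mask
  ip=$1; net=${2%/*}; bits=${2#*/}
  # Build the network mask from the prefix length.
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Sample addresses are illustrative only; substitute the cluster node IPs.
for ip in 10.20.0.10 10.20.3.255 10.20.4.1; do
  if ip_in_cidr "$ip" 10.20.0.0/22; then
    echo "$ip is covered by the export rule"
  else
    echo "$ip is outside the export rule"
  fi
done
```

A /22 block spans 1024 addresses, so 10.20.0.0/22 covers 10.20.0.0 through 10.20.3.255. A rule restricted to a single node would use that node's address with a /32 suffix.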

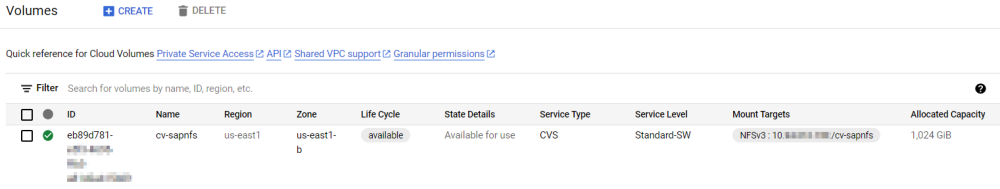

The cv-sapnfs Cloud Volume will appear on the Cloud Volumes → Volumes page once it has been successfully provisioned.

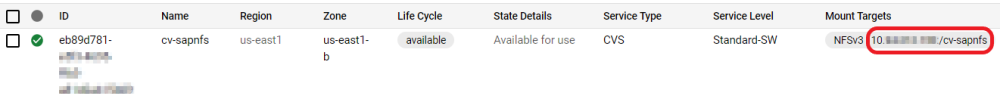

- Note the Mount Target for the NFS export. This will be used to mount the shared file system on the cluster nodes. In the following steps we will use <Mount Target IP> to refer to the IP address of the NFS server listed here.

- Execute the following commands on both node-a and node-b to create the /sapnfs mount point for the cv-sapnfs NFS share. Add the given mount entry to /etc/fstab on both nodes (replacing <Mount Target IP> with the IP obtained from the Cloud Volumes page in the previous step) so that each cluster node mounts the shared file system at boot.

    # mkdir /sapnfs
    # echo "<Mount Target IP>:/cv-sapnfs /sapnfs nfs nfsvers=3,proto=tcp,rw,sync,bg 0 0" >> /etc/fstab
    # mount -a
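Because `echo ... >> /etc/fstab` appends a new line every time it runs, repeating the step leaves duplicate entries. The sketch below shows one possible way to make the append idempotent. The helper name `add_fstab_entry` is hypothetical, and the example invocation is commented out so that running the sketch changes nothing on the system.

```shell
#!/usr/bin/env bash
# Append an fstab entry only if no entry for the same mount point exists.
# $1 = complete fstab line, $2 = fstab file (defaults to /etc/fstab).
add_fstab_entry() {
  local entry fstab mountpoint
  entry=$1
  fstab=${2:-/etc/fstab}
  # The mount point is the second whitespace-separated field of the entry.
  mountpoint=$(printf '%s\n' "$entry" | awk '{print $2}')
  # Ignore comment lines and look for an existing entry with that mount point.
  if awk -v mp="$mountpoint" \
       '$1 !~ /^#/ && $2 == mp {found=1} END {exit !found}' "$fstab" 2>/dev/null
  then
    echo "entry for $mountpoint already present in $fstab" >&2
    return 0
  fi
  printf '%s\n' "$entry" >> "$fstab"
}

# Example (run as root; replace <Mount Target IP> with the real address):
# add_fstab_entry "<Mount Target IP>:/cv-sapnfs /sapnfs nfs nfsvers=3,proto=tcp,rw,sync,bg 0 0"
```

Matching on the mount point rather than the whole line means a changed server address is reported as already present instead of producing a second entry for /sapnfs. Whether that behavior is desirable depends on the environment.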

Verify that the file system has been mounted successfully on both nodes.

    # df -h | grep sapnfs
    <Mount Target IP>:/cv-sapnfs  1.0T  1.2G  1023G  1% /sapnfs
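The `df` check above relies on reading the output by eye. For a scripted check on each node, something along the following lines may be useful. It is a sketch that assumes a Linux node where the mount table is available at /proc/mounts, and the function name `is_nfs_mounted` is hypothetical.

```shell
#!/usr/bin/env bash
# Return 0 if $1 is mounted with an NFS file system type (nfs, nfs4).
# $2 = mount table to read (defaults to /proc/mounts).
is_nfs_mounted() {
  local mp table
  mp=$1
  table=${2:-/proc/mounts}
  # Fields in the mount table: device, mount point, fs type, options, ...
  awk -v mp="$mp" '$2 == mp && $3 ~ /^nfs/ {found=1} END {exit !found}' \
    "$table" 2>/dev/null
}

if is_nfs_mounted /sapnfs; then
  echo "/sapnfs is mounted over NFS"
else
  echo "/sapnfs is NOT mounted over NFS" >&2
fi
```

The exit status can be used directly in provisioning scripts, for example to stop before the directory-creation step if the share is missing.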

- Execute the following command on node-a to create the subdirectories within /sapnfs which will contain the shared SAP files.

[root@node-a ~]# mkdir -p /sapnfs/{sapmnt/SPS,usr/sap/trans,usr/sap/SPS/{ASCS10,ERS20}}

Verify that the subdirectories were created successfully.

    [root@node-a ~]# du -a /sapnfs
    0 /sapnfs/sapmnt/SPS
    0 /sapnfs/sapmnt
    0 /sapnfs/usr/sap/trans
    0 /sapnfs/usr/sap/SPS/ASCS10
    0 /sapnfs/usr/sap/SPS/ERS20
    0 /sapnfs/usr/sap/SPS
    0 /sapnfs/usr/sap
    0 /sapnfs/usr
    0 /sapnfs
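The `mkdir -p` command above uses nested brace expansion, which is compact but easy to misread. As an illustration only, the same tree can be written out one directory per line with the system ID and instance names as parameters. The function name `create_sap_dirs` is hypothetical, and the example invocation is commented out so the sketch has no side effects.

```shell
#!/usr/bin/env bash
# Create the shared SAP directory tree under a given root.
# $1 = root of the shared file system, $2 = SAP system ID,
# $3 = ASCS instance directory name, $4 = ERS instance directory name.
create_sap_dirs() {
  local root sid ascs ers
  root=$1; sid=$2; ascs=$3; ers=$4
  # -p creates missing parents and does not fail if a directory exists.
  mkdir -p \
    "$root/sapmnt/$sid" \
    "$root/usr/sap/trans" \
    "$root/usr/sap/$sid/$ascs" \
    "$root/usr/sap/$sid/$ers"
}

# Example matching the values used in this section (run on node-a only):
# create_sap_dirs /sapnfs SPS ASCS10 ERS20
# find /sapnfs -type d | sort
```

Since /sapnfs is shared, the directories only need to be created once and are then visible from both nodes.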

- Execute the following commands on both node-a and node-b to create soft links from the SAP file systems to their corresponding subdirectories under /sapnfs.

    # mkdir -p /sapmnt /usr/sap/SPS
    # ln -s /sapnfs/sapmnt/SPS /sapmnt/SPS
    # ln -s /sapnfs/usr/sap/SPS/ASCS10 /usr/sap/SPS/ASCS10
    # ln -s /sapnfs/usr/sap/SPS/ERS20 /usr/sap/SPS/ERS20
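Plain `ln -s` fails if the link already exists, and if the link path already points to a directory it creates a nested link inside that directory instead. The following sketch shows one way to make the linking step safe to repeat and to confirm each link as it is created. The helper name `link_and_check` is hypothetical, and the example invocations are commented out.

```shell
#!/usr/bin/env bash
# Create (or refresh) a soft link and confirm that it points at the target.
# $1 = target under the shared file system, $2 = link path on the node.
link_and_check() {
  local target link
  target=$1
  link=$2
  # Make sure the parent directory of the link exists.
  mkdir -p "$(dirname "$link")"
  # -f replaces an existing link; -n avoids descending into a link
  # that already points to a directory.
  ln -sfn "$target" "$link"
  # readlink prints the stored target of the link.
  [ "$(readlink "$link")" = "$target" ]
}

# Example matching the links in this section (run as root on each node):
# link_and_check /sapnfs/sapmnt/SPS         /sapmnt/SPS
# link_and_check /sapnfs/usr/sap/SPS/ASCS10 /usr/sap/SPS/ASCS10
# link_and_check /sapnfs/usr/sap/SPS/ERS20  /usr/sap/SPS/ERS20
```

Note that this does not handle the case where the link path already exists as a real directory. In that situation the directory would need to be inspected and moved aside manually first.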

Verify that the soft links have been created successfully on each node.

    # ls -l /sapmnt /usr/sap/SPS
    /sapmnt:
    total 0
    lrwxrwxrwx 1 root root 01 Jan 1 00:00 SPS -> /sapnfs/sapmnt/SPS

    /usr/sap/SPS:
    total 0
    lrwxrwxrwx 1 root root 01 Jan 1 00:00 ASCS10 -> /sapnfs/usr/sap/SPS/ASCS10
    lrwxrwxrwx 1 root root 01 Jan 1 00:00 ERS20 -> /sapnfs/usr/sap/SPS/ERS20

The shared file systems are now prepared for installation of the SAP instances.
