In this section we will create highly-available NFS shared file systems which will be mounted on each node hosting an SAP instance. We will also create SIOS DataKeeper mirrors to replicate data for the ASCS10 and ERS20 instances between node-a and node-b. Typical file systems to be shared or replicated between systems in a highly-available SAP AS ABAP environment include:

- /sapmnt/<SID>

- /usr/sap/trans

- /usr/sap/<SID>/ASCS<InstNum>

- /usr/sap/<SID>/ERS<InstNum>

See Setting up File Systems for a High-Availability System for more details.

- Create and attach four additional disks to node-a and node-b to support the highly-available SAP installation. The device names in your environment may differ from those used in this example (e.g., /dev/xvdb instead of /dev/sdb), so adjust the commands in this section to use the appropriate device names. Note that the disk sizes shown are for evaluation purposes only. Consult the relevant documentation from SAP, your cloud provider, and any third-party storage provider when provisioning resources in a production environment.

| Device | Size | Mount Point |
|---|---|---|
| /dev/sdb | 10GB | /export/sapmnt/SPS |
| /dev/sdc | 10GB | /export/usr/sap/trans |
| /dev/sdd | 10GB | /usr/sap/SPS/ASCS10 |
| /dev/sde | 10GB | /usr/sap/SPS/ERS20 |

- Execute the following commands on both node-a and node-b to create a /dev/sdb1 partition with an xfs file system.

[root@node-a ~]# parted /dev/sdb --script mklabel gpt mkpart xfspart xfs 0% 100%

[root@node-a ~]# mkfs.xfs /dev/sdb1

meta-data=/dev/sdb1 isize=512 agcount=4, agsize=1310592 blks

= sectsz=4096 attr=2, projid32bit=1

= crc=1 finobt=1, sparse=1, rmapbt=0

= reflink=1

data = bsize=4096 blocks=5242368, imaxpct=25

= sunit=0 swidth=0 blks

naming =version 2 bsize=4096 ascii-ci=0, ftype=1

log =internal log bsize=4096 blocks=2560, version=2

= sectsz=4096 sunit=1 blks, lazy-count=1

realtime =none extsz=4096 blocks=0, rtextents=0

[root@node-a ~]# partprobe /dev/sdb1

Repeat these commands for /dev/sdc, /dev/sdd, and /dev/sde on both node-a and node-b.
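Repeating the same three commands for four disks on two nodes is easy to get wrong by hand. The following is a minimal sketch that prints the commands for a list of devices so they can be reviewed before anything is run. The function name print_disk_prep_cmds is hypothetical, and the sketch assumes the first partition is named by appending 1 to the device name (true for /dev/sdX and /dev/xvdX devices, but not for NVMe devices, which use a p1 suffix).

```shell
# Print (do not execute) the partitioning commands for each device given
# as an argument, in the same order as the steps shown above.
# Assumption: the partition is named "<device>1".
print_disk_prep_cmds() {
    for dev in "$@"; do
        echo "parted $dev --script mklabel gpt mkpart xfspart xfs 0% 100%"
        echo "mkfs.xfs ${dev}1"
        echo "partprobe ${dev}1"
    done
}

# Device names taken from the example environment in this section.
print_disk_prep_cmds /dev/sdb /dev/sdc /dev/sdd /dev/sde
```

Once the printed commands look right for the node, they can be run as root on both node-a and node-b.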

- Execute the following command on both node-a and node-b to create the mount points for the shared and replicated SAP file systems:

[root@node-a ~]# mkdir -p /export/{usr/sap/trans,sapmnt/SPS} /sapmnt/SPS /usr/sap/{trans,SPS/{ASCS10,ERS20,SYS}}
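The brace expansion in the command above is a bash feature and expands to seven directories. As a sketch, the same directories can be listed explicitly, which makes the result easier to audit and also works in shells without brace expansion. The function name make_sap_mount_points and its optional prefix argument are illustrative assumptions; the prefix exists only so the function can be tried in a scratch directory first.

```shell
# Create the seven mount points that the brace expansion above produces.
# $1 is an optional path prefix (hypothetical, for dry runs); when it is
# empty the directories are created under /.
make_sap_mount_points() {
    prefix="${1:-}"
    for d in \
        /export/usr/sap/trans \
        /export/sapmnt/SPS \
        /sapmnt/SPS \
        /usr/sap/trans \
        /usr/sap/SPS/ASCS10 \
        /usr/sap/SPS/ERS20 \
        /usr/sap/SPS/SYS
    do
        mkdir -p "${prefix}${d}" || return 1
    done
}

# Dry run in a scratch directory, then list what was created.
scratch=$(mktemp -d)
make_sap_mount_points "$scratch"
find "$scratch" -type d | sort
rm -rf "$scratch"
```

Calling make_sap_mount_points with no argument as root creates the real mount points.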

Create DataKeeper Data Replication Resource Hierarchies

- Following the steps described in How to Create Data Replication of a File System, use the following parameters to create and extend a DataKeeper data replication resource (datarep-/export/sapmnt/SPS) that mirrors the contents of the /export/sapmnt/SPS directory between node-a and node-b. The DataKeeper resource is created on node-a and extended to node-b. Do not extend the resulting resource to node-c, the witness node. The icon indicates that the default option is chosen.

| Create Resource Wizard | |
|---|---|
| Switchback Type | intelligent |
| Server | node-a |
| Hierarchy Type | Replicate New Filesystem |
| Source Disk | /dev/sdb1 (10.0 GB) |
| New Mount Point | /export/sapmnt/SPS |
| New Filesystem Type | xfs |
| Data Replication Resource Tag | datarep-/export/sapmnt/SPS |
| File System Resource Tag | /export/sapmnt/SPS |
| Bitmap File | /opt/LifeKeeper/bitmap__export_sapmnt_SPS |
| Enable Asynchronous Replication? | no |

| Pre-Extend Wizard | |
|---|---|
| Target Server | node-b |
| Switchback Type | intelligent |
| Template Priority | 1 |
| Target Priority | 10 |

| Extend scsi/netraid Resource Hierarchy Wizard | |
|---|---|
| Mount Point | /export/sapmnt/SPS |
| Root Tag | /export/sapmnt/SPS |
| Target Disk | /dev/sdb1 (10.0 GB) |
| Data Replication Resource Tag | datarep-/export/sapmnt/SPS |
| Bitmap File | /opt/LifeKeeper/bitmap__export_sapmnt_SPS |
| Replication Path | 10.20.1.10/10.20.2.10 |

- Following the steps described in How to Create Data Replication of a File System, use the following parameters to create and extend a DataKeeper data replication resource (datarep-/export/usr/sap/trans) that mirrors the contents of the /export/usr/sap/trans directory between node-a and node-b. The DataKeeper resource is created on node-a and extended to node-b. Do not extend the resulting resource to node-c, the witness node. The icon indicates that the default option is chosen.

| Create Resource Wizard | |
|---|---|
| Switchback Type | intelligent |
| Server | node-a |
| Hierarchy Type | Replicate New Filesystem |
| Source Disk | /dev/sdc1 (10.0 GB) |
| New Mount Point | /export/usr/sap/trans |
| New Filesystem Type | xfs |
| Data Replication Resource Tag | datarep-/export/usr/sap/trans |
| File System Resource Tag | /export/usr/sap/trans |
| Bitmap File | /opt/LifeKeeper/bitmap__export_usr_sap_trans |
| Enable Asynchronous Replication? | no |

| Pre-Extend Wizard | |
|---|---|
| Target Server | node-b |
| Switchback Type | intelligent |
| Template Priority | 1 |
| Target Priority | 10 |

| Extend scsi/netraid Resource Hierarchy Wizard | |
|---|---|
| Mount Point | /export/usr/sap/trans |
| Root Tag | /export/usr/sap/trans |
| Target Disk | /dev/sdc1 (10.0 GB) |
| Data Replication Resource Tag | datarep-/export/usr/sap/trans |
| Bitmap File | /opt/LifeKeeper/bitmap__export_usr_sap_trans |
| Replication Path | 10.20.1.10/10.20.2.10 |

- Following the steps described in How to Create Data Replication of a File System, use the following parameters to create and extend a DataKeeper data replication resource (datarep-/usr/sap/SPS/ASCS10) that mirrors the contents of the /usr/sap/SPS/ASCS10 directory between node-a and node-b. The DataKeeper resource is created on node-a and extended to node-b. Do not extend the resulting resource to node-c, the witness node. The icon indicates that the default option is chosen.

| Create Resource Wizard | |
|---|---|
| Switchback Type | intelligent |
| Server | node-a |
| Hierarchy Type | Replicate New Filesystem |
| Source Disk | /dev/sdd1 (10.0 GB) |
| New Mount Point | /usr/sap/SPS/ASCS10 |
| New Filesystem Type | xfs |
| Data Replication Resource Tag | datarep-/usr/sap/SPS/ASCS10 |
| File System Resource Tag | /usr/sap/SPS/ASCS10 |
| Bitmap File | /opt/LifeKeeper/bitmap__usr_sap_SPS_ASCS10 |
| Enable Asynchronous Replication? | no |

| Pre-Extend Wizard | |
|---|---|
| Target Server | node-b |
| Switchback Type | intelligent |
| Template Priority | 1 |
| Target Priority | 10 |

| Extend scsi/netraid Resource Hierarchy Wizard | |
|---|---|
| Mount Point | /usr/sap/SPS/ASCS10 |
| Root Tag | /usr/sap/SPS/ASCS10 |
| Target Disk | /dev/sdd1 (10.0 GB) |
| Data Replication Resource Tag | datarep-/usr/sap/SPS/ASCS10 |
| Bitmap File | /opt/LifeKeeper/bitmap__usr_sap_SPS_ASCS10 |
| Replication Path | 10.20.1.10/10.20.2.10 |

- Following the steps described in How to Create Data Replication of a File System, use the following parameters to create and extend a DataKeeper data replication resource (datarep-/usr/sap/SPS/ERS20) that mirrors the contents of the /usr/sap/SPS/ERS20 directory between node-a and node-b. Unlike the previous three resources, this DataKeeper resource is created on node-b and extended to node-a. Do not extend the resulting resource to node-c, the witness node. The icon indicates that the default option is chosen.

| Create Resource Wizard | |
|---|---|
| Switchback Type | intelligent |
| Server | node-b |
| Hierarchy Type | Replicate New Filesystem |
| Source Disk | /dev/sde1 (10.0 GB) |
| New Mount Point | /usr/sap/SPS/ERS20 |
| New Filesystem Type | xfs |
| Data Replication Resource Tag | datarep-/usr/sap/SPS/ERS20 |
| File System Resource Tag | /usr/sap/SPS/ERS20 |
| Bitmap File | /opt/LifeKeeper/bitmap__usr_sap_SPS_ERS20 |
| Enable Asynchronous Replication? | no |

| Pre-Extend Wizard | |
|---|---|
| Target Server | node-a |
| Switchback Type | intelligent |
| Template Priority | 1 |
| Target Priority | 10 |

| Extend scsi/netraid Resource Hierarchy Wizard | |
|---|---|
| Mount Point | /usr/sap/SPS/ERS20 |
| Root Tag | /usr/sap/SPS/ERS20 |
| Target Disk | /dev/sde1 (10.0 GB) |
| Data Replication Resource Tag | datarep-/usr/sap/SPS/ERS20 |
| Bitmap File | /opt/LifeKeeper/bitmap__usr_sap_SPS_ERS20 |
| Replication Path | 10.20.2.10/10.20.1.10 |

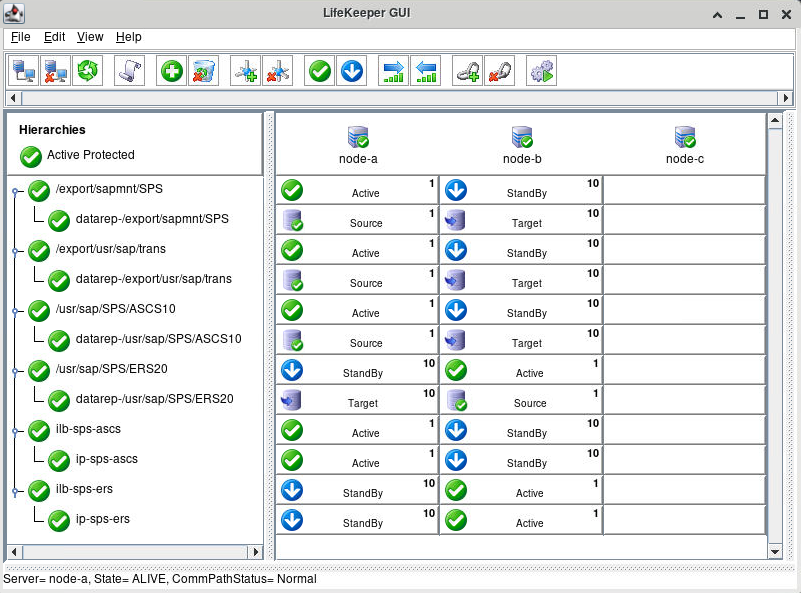

Once all of the DataKeeper data replication resource hierarchies have been created, the LifeKeeper GUI should look similar to the following image:
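The resource tags and bitmap file names in the four wizard tables follow a visible pattern: the data replication tag is the mount point prefixed with datarep-, and the bitmap file is /opt/LifeKeeper/bitmap_ followed by the mount point with every / replaced by _. The sketch below reproduces those values for cross-checking wizard input. The pattern is inferred from the values shown in this section, not taken from the LifeKeeper documentation, and the function names are hypothetical.

```shell
# Derive the names used in the wizard tables from a mount point.
# Assumption: the naming pattern is inferred from the tables above.
datarep_tag() {
    printf 'datarep-%s\n' "$1"
}

bitmap_file() {
    printf '/opt/LifeKeeper/bitmap_%s\n' "$(printf '%s' "$1" | tr '/' '_')"
}

# Print the expected tag and bitmap file for each replicated mount point.
for mp in /export/sapmnt/SPS /export/usr/sap/trans \
          /usr/sap/SPS/ASCS10 /usr/sap/SPS/ERS20
do
    printf '%s  %s  %s\n' "$mp" "$(datarep_tag "$mp")" "$(bitmap_file "$mp")"
done
```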

Create NFS Resource Hierarchies

- Add the following entries to /etc/exports on node-a:

/export/sapmnt/SPS *(rw,sync,no_root_squash)

/export/usr/sap/trans *(rw,sync,no_root_squash)
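If this step is scripted or repeated, appending the entries unconditionally leaves duplicate lines in /etc/exports. A minimal sketch of an idempotent append follows. The helper name append_once is hypothetical, and it takes the file path as an argument so it can be tried on a copy of the file first.

```shell
# Append a line to a file only if an identical line is not already there.
# $1 = file, $2 = exact line to add.
append_once() {
    file="$1"
    line="$2"
    touch "$file" || return 1
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Example (as root on node-a):
#   append_once /etc/exports '/export/sapmnt/SPS *(rw,sync,no_root_squash)'
#   append_once /etc/exports '/export/usr/sap/trans *(rw,sync,no_root_squash)'
```

The single quotes keep the shell from expanding the * in the client specification.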

- Execute the following command on node-a to export the shared file systems:

[root@node-a ~]# exportfs -rav

exporting *:/export/usr/sap/trans

exporting *:/export/sapmnt/SPS

- Execute the following command on node-a, node-b, and the servers that will host the PAS and AAS instances to verify that the shared file systems are visible from each of them:

# showmount -e sps-ascs

Export list for sps-ascs:

/export/usr/sap/trans *

/export/sapmnt/SPS *
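Because this check has to be repeated on several hosts, it lends itself to a small script. The following sketch reads showmount -e output on standard input and succeeds only if every expected export path is listed. The function name exports_visible is hypothetical, and the sketch assumes the output format shown above, with the export path in the first column.

```shell
# Read `showmount -e` output on stdin; return 0 only if every path passed
# as an argument appears in the first column of the export list.
exports_visible() {
    list=$(cat)
    for want in "$@"; do
        printf '%s\n' "$list" |
            awk -v p="$want" '$1 == p { found = 1 } END { exit !found }' ||
            return 1
    done
}

# Example:
#   showmount -e sps-ascs |
#       exports_visible /export/sapmnt/SPS /export/usr/sap/trans &&
#       echo "both exports visible"
```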

- Following the steps described in Protecting an NFS Resource, use the following parameters to create and extend an NFS resource (nfs-/export/sapmnt/SPS) that protects the shared /export/sapmnt/SPS file system. The NFS resource is created on node-a and extended to node-b. Do not extend the resulting resource to node-c, the witness node. The icon indicates that the default option is chosen.

| Create Resource Wizard | |
|---|---|
| Switchback Type | intelligent |
| Server | node-a |
| Export Point | /export/sapmnt/SPS |
| IP Tag | ip-sps-ascs |
| NFS Tag | nfs-/export/sapmnt/SPS |

| Pre-Extend Wizard | |
|---|---|
| Target Server | node-b |
| Switchback Type | intelligent |
| Template Priority | 1 |
| Target Priority | 10 |

| Extend gen/nfs Resource Hierarchy Wizard | |
|---|---|
| NFS Tag | nfs-/export/sapmnt/SPS |

- Following the steps described in Protecting an NFS Resource, use the following parameters to create and extend an NFS resource (nfs-/export/usr/sap/trans) that protects the shared /export/usr/sap/trans file system. The NFS resource is created on node-a and extended to node-b. Do not extend the resulting resource to node-c, the witness node. The icon indicates that the default option is chosen.

| Create Resource Wizard | |
|---|---|
| Switchback Type | intelligent |
| Server | node-a |
| Export Point | /export/usr/sap/trans |
| IP Tag | ip-sps-ascs |
| NFS Tag | nfs-/export/usr/sap/trans |

| Pre-Extend Wizard | |
|---|---|
| Target Server | node-b |
| Switchback Type | intelligent |
| Template Priority | 1 |
| Target Priority | 10 |

| Extend gen/nfs Resource Hierarchy Wizard | |
|---|---|
| NFS Tag | nfs-/export/usr/sap/trans |

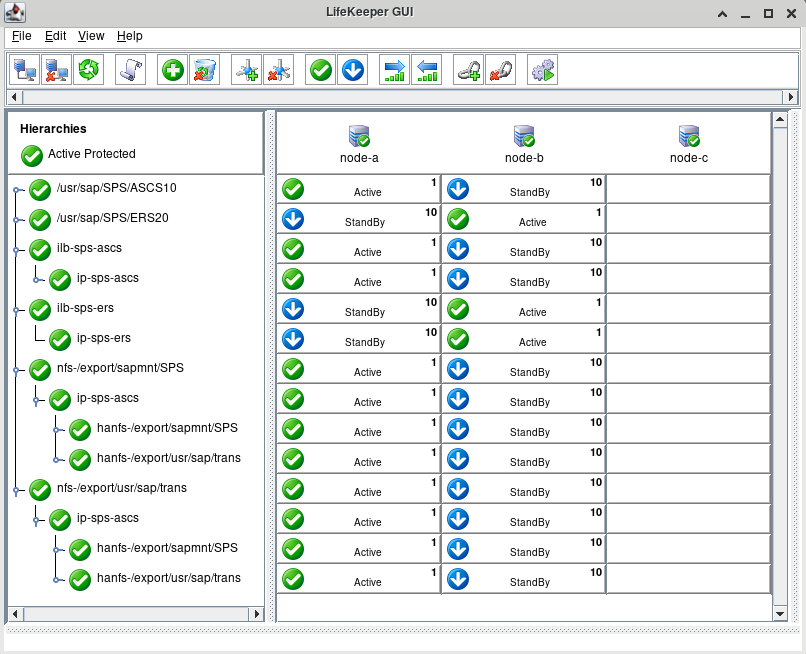

Once the NFS resource hierarchies have been created successfully, the LifeKeeper GUI should resemble the following image.

- Right-click on the nfs-/export/sapmnt/SPS resource and select Delete Dependency… from the drop-down menu. For Child Resource Tag, select ip-sps-ascs. Click Next> to continue, then click Delete Dependency to delete the dependency.

- Right-click on the nfs-/export/sapmnt/SPS resource and select Create Dependency… from the drop-down menu. For Child Resource Tag, select ilb-sps-ascs. Click Next> to continue, then click Create Dependency to create the dependency.

- Right-click on the nfs-/export/usr/sap/trans resource and select Delete Dependency… from the drop-down menu. For Child Resource Tag, select ip-sps-ascs. Click Next> to continue, then click Delete Dependency to delete the dependency.

- Right-click on the nfs-/export/usr/sap/trans resource and select Create Dependency… from the drop-down menu. For Child Resource Tag, select ilb-sps-ascs. Click Next> to continue, then click Create Dependency to create the dependency.

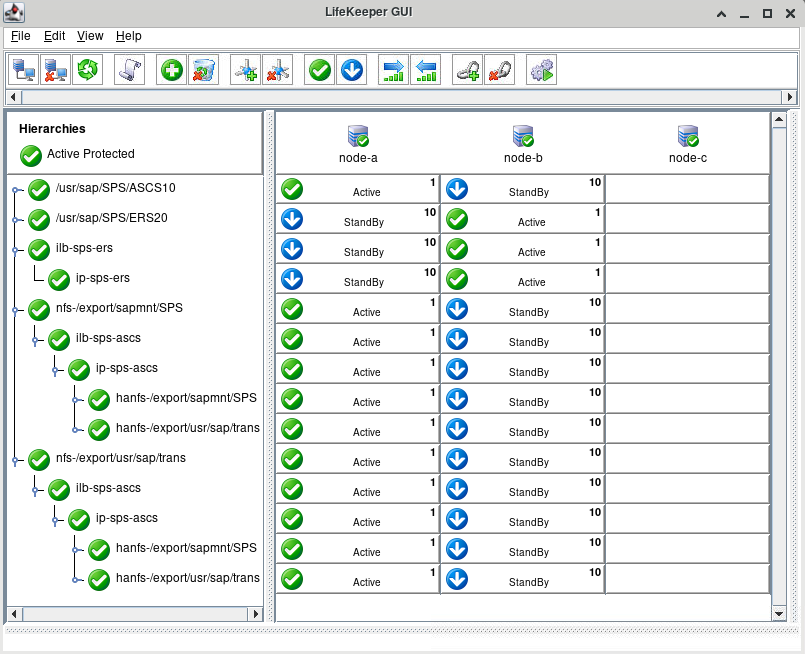

Once these steps are complete, the LifeKeeper GUI should resemble the following image.

Add Mount Entries to /etc/fstab

- Execute the following commands on node-a, node-b, node-d, and node-e (the ASCS, ERS, PAS, and AAS instance hosts) to mount sps-ascs:/export/sapmnt/SPS at /sapmnt/SPS during boot:

# echo "sps-ascs:/export/sapmnt/SPS /sapmnt/SPS nfs nfsvers=3,proto=tcp,rw,sync,bg 0 0" >> /etc/fstab

# mount /sapmnt/SPS

- Execute the following commands on node-d and node-e (the PAS and AAS instance hosts) to mount sps-ascs:/export/usr/sap/trans at /usr/sap/trans during boot:

# echo "sps-ascs:/export/usr/sap/trans /usr/sap/trans nfs nfsvers=3,proto=tcp,rw,sync,bg 0 0" >> /etc/fstab

# mount /usr/sap/trans
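Running the echo commands above a second time adds a duplicate entry to /etc/fstab. The sketch below composes the same fstab line from its parts and checks whether a mount point already has an entry before anything is appended. Both function names are hypothetical; the mount options are the ones used in this section.

```shell
# Compose the fstab line used in this section.
# $1 = NFS server name, $2 = exported path, $3 = local mount point.
nfs_fstab_line() {
    printf '%s:%s %s nfs nfsvers=3,proto=tcp,rw,sync,bg 0 0\n' "$1" "$2" "$3"
}

# Return 0 if the fstab file ($1) already has a non-comment entry whose
# second field is the mount point ($2).
fstab_has_mount() {
    awk -v m="$2" '$1 !~ /^#/ && $2 == m { found = 1 } END { exit !found }' "$1"
}

# Example (as root):
#   fstab_has_mount /etc/fstab /sapmnt/SPS ||
#       nfs_fstab_line sps-ascs /export/sapmnt/SPS /sapmnt/SPS >> /etc/fstab
#   mount /sapmnt/SPS
```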

Modify /etc/default/LifeKeeper

Add the line ‘SAP_NFS_CHECK_DIRS=/sapmnt/SPS’ to /etc/default/LifeKeeper on node-a and node-b to allow the SAP Recovery Kit to monitor the availability of the sps-ascs:/export/sapmnt/SPS NFS share before performing administrative tasks that require access to files found on the shared file system.

Also add the line ‘NFS_RPC_PROTOCOL=tcp’ to /etc/default/LifeKeeper on node-a and node-b to ensure that the pingnfs utility uses the TCP protocol when checking the availability of the NFS shares.
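As an alternative to editing the file by hand, the two settings can be applied non-interactively. The following is a minimal sketch, assuming the file consists of plain KEY=VALUE lines and that the values contain no | or & characters (both are special in the sed replacement used here). The helper name set_default is hypothetical and is not part of LifeKeeper.

```shell
# Set KEY=VALUE in a defaults file: replace an existing assignment of
# KEY, or append a new line if there is none.
# $1 = file, $2 = key, $3 = value.
set_default() {
    file="$1"
    key="$2"
    value="$3"
    if grep -q "^${key}=" "$file" 2>/dev/null; then
        tmp=$(mktemp) || return 1
        # Write back with cat so the file keeps its owner and permissions.
        sed "s|^${key}=.*|${key}=${value}|" "$file" > "$tmp" &&
            cat "$tmp" > "$file"
        rc=$?
        rm -f "$tmp"
        return "$rc"
    fi
    printf '%s=%s\n' "$key" "$value" >> "$file"
}

# Example (as root on node-a and node-b):
#   set_default /etc/default/LifeKeeper SAP_NFS_CHECK_DIRS /sapmnt/SPS
#   set_default /etc/default/LifeKeeper NFS_RPC_PROTOCOL tcp
```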

# vi /etc/default/LifeKeeper

# grep 'SAP_NFS_CHECK_DIRS=\|NFS_RPC_PROTOCOL=' /etc/default/LifeKeeper

SAP_NFS_CHECK_DIRS=/sapmnt/SPS

NFS_RPC_PROTOCOL=tcp

The shared file systems are now prepared for installation of the SAP instances.
