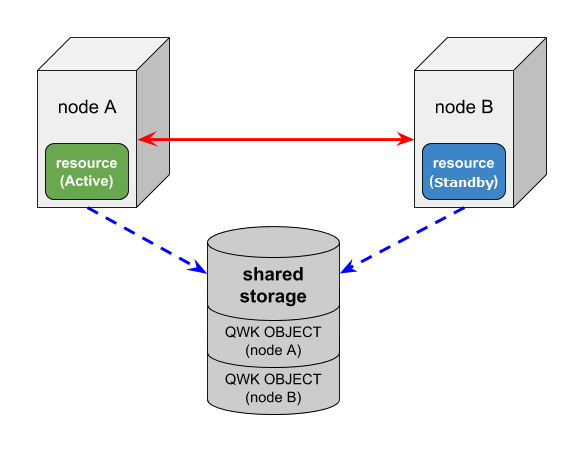

In this mode, each node regularly writes information about itself to a shared storage device and periodically reads the information written by the other nodes. A cluster has quorum consensus when each node can access the shared storage device, update its own quorum object, and see that the quorum objects for all other nodes are being updated. The node information stored on the shared storage device is called a quorum object, or QWK object for short. A QWK object is required for every node configured in the cluster.

Quorum checking determines that a node has quorum when it has access to the shared storage device. Witness checking reads the QWK objects of the other nodes to determine each node's current state: it verifies that updates to those QWK objects are still occurring on a regular basis. If a node's QWK object has not been updated for a certain period of time, that node is considered to be in a failed state. During the check, the checking node also updates its own QWK object. Witness checking is performed whenever quorum checking is performed.
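
The witness check described above can be sketched as a small counter of consecutive missed updates. This is an illustration only, not LifeKeeper's implementation: the class name `WitnessTracker`, the `max_missed` threshold, and the idea of detecting an update by comparing the bytes read against the previous read are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WitnessTracker:
    """Tracks whether one peer's QWK object is still being updated (hypothetical helper)."""

    max_missed: int                      # consecutive misses tolerated before declaring failure
    last_seen: Optional[bytes] = None    # content observed at the previous check
    missed: int = 0                      # current run of consecutive missed updates

    def observe(self, content: bytes) -> bool:
        """Record one read of the peer's QWK object; return True if the peer looks failed."""
        if self.last_seen is not None and content == self.last_seen:
            self.missed += 1             # nothing changed since the last check
        else:
            self.missed = 0              # first read, or the peer wrote an update
        self.last_seen = content
        return self.missed >= self.max_missed
```

A single unchanged read is not enough to declare a failure in this sketch; the peer is only reported as failed after `max_missed` consecutive reads show no change, and any fresh update resets the count.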

When “storage” is selected for quorum mode, “storage” must be selected for witness mode.

This quorum mode can be used for two-node, three-node, or four-node clusters. The shared storage used to store the QWK objects for all nodes must be configured separately. If a node loses access to the shared storage, its ability to bring resources in service is affected, so select a shared storage device that is always accessible from all nodes.

Available Shared Storage

The purpose of the quorum/witness function is to avoid a split brain scenario. Therefore, correctly configuring the storage quorum mode choice is critical to ensure all nodes in the cluster can see all the QWK objects. This is accomplished by placing all the QWK objects in the same type of shared storage: block devices, regular files, or S3 objects.

The available shared storage choices are shown below. Specify the type of shared storage being used via the QWK_STORAGE_TYPE setting in the /etc/default/LifeKeeper configuration file.

| QWK_STORAGE_TYPE value | Description |
| --- | --- |
| block | When using physical storage, RDM (physical compatibility), or iSCSI (in-VM initiator) for shared storage, allocate one QWK object in one of the following ways: (a) 1 QWK object = 1 partition; (b) 1 QWK object = 1 LU. In case (a), because multiple hosts write to one LU, align the offset of each partition within the LU to 4K (the sector size of the storage device). Also, do not mix in partitions used for other purposes. |
| block | When using VMDK for shared storage, allocate one QWK object as follows: 1 QWK object = 1 VMDK. Do not create partitions; no file system needs to be created either. Use thick (eager zeroed) provisioning. |
| file | When using NFS for shared storage, allocate one QWK object as follows: 1 QWK object = 1 regular file in the NFS file system. Set the export options on the NFS server as follows: rw,no_root_squash,sync,no_wdelay. Mount the NFS file system with the following options: soft,timeo=20,retrans=1,intr,noac. Configure /etc/fstab so that the file system is mounted automatically after the OS reboots. |
| aws_s3 | When using Amazon Simple Storage Service (S3) for shared storage, allocate one QWK object as follows: 1 QWK object = 1 S3 object. Use S3 in a region different from the region where LifeKeeper is running. Also, due to the Amazon S3 Data Consistency Model, old data may be returned if a request is made right after the QWK objects are updated; therefore, two QWK objects can be specified for one node when using S3 (this is only available with S3). Note: If the path to the AWS CLI executable files is not already included in the “PATH” parameter in the LifeKeeper defaults file /etc/default/LifeKeeper, you must append the path to the AWS CLI executables for LifeKeeper to function correctly when using S3 objects. |
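
For the NFS case, the mount options listed in the table can be checked against an /etc/fstab entry. The following is a minimal sketch; the function name `missing_nfs_options` is hypothetical, and it assumes a standard whitespace-separated fstab entry whose fourth field holds the comma-separated mount options.

```python
from typing import List

# Mount options required for the NFS file system that holds the QWK objects.
REQUIRED_NFS_OPTIONS = ("soft", "timeo=20", "retrans=1", "intr", "noac")


def missing_nfs_options(fstab_line: str) -> List[str]:
    """Return the required mount options absent from one /etc/fstab entry."""
    fields = fstab_line.split()
    if len(fields) < 4:
        raise ValueError("not a complete fstab entry")
    present = set(fields[3].split(","))   # fourth fstab field holds the mount options
    return [opt for opt in REQUIRED_NFS_OPTIONS if opt not in present]
```

An empty result means every required option is present. The check is purely textual, so it does not confirm what options the kernel actually applied to a live mount.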

The size of 1 QWK object is 4096 bytes.

Quorum/witness checking reads and writes the node's own QWK object and only reads the QWK objects of other nodes. Set the access rights appropriately (be careful of permission restrictions such as granting Persistent Reservation to the shared storage).

Storage Mode Configuration

QUORUM_MODE and WITNESS_MODE should be configured as storage in the /etc/default/LifeKeeper configuration file. The following configuration parameters are also available when using storage:

- QWK_STORAGE_TYPE – Specifies the type of shared storage being used.

- QWK_STORAGE_HBEATTIME – Specifies the interval in seconds between reading and writing the QWK objects.

- QWK_STORAGE_NUMHBEATS – Specifies the number of consecutive missed heartbeat checks after which the target node is considered to have failed. A missed heartbeat occurs when the QWK object has not been updated since the last check.

- QWK_STORAGE_OBJECT_ – Specifies the path to the QWK object for each node in the cluster. Entries for all nodes in the cluster are required.

- HTTP_PROXY, HTTPS_PROXY, NO_PROXY – Set these parameters when using an HTTP proxy to access the service endpoint. The values set here are passed to the AWS CLI.

See the “Quorum Parameter List” for more information.
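
As a rough illustration of how these settings fit together, the sketch below parses configuration text and reports obvious inconsistencies. It assumes the file consists of simple `KEY=value` lines, which is an assumption about the format rather than something stated here. The function names, the node suffixes after `QWK_STORAGE_OBJECT_`, and the sample paths are all hypothetical.

```python
from typing import Dict, List

VALID_STORAGE_TYPES = {"block", "file", "aws_s3"}
OBJECT_PREFIX = "QWK_STORAGE_OBJECT_"


def parse_defaults(text: str) -> Dict[str, str]:
    """Parse KEY=value lines, ignoring blank lines and '#' comments."""
    settings: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        settings[key.strip()] = value.strip().strip('"')
    return settings


def check_storage_settings(settings: Dict[str, str]) -> List[str]:
    """Return a list of problems found in the storage quorum settings."""
    problems: List[str] = []
    if settings.get("QUORUM_MODE") != "storage":
        problems.append("QUORUM_MODE is not storage")
    if settings.get("WITNESS_MODE") != "storage":
        problems.append("WITNESS_MODE is not storage")
    if settings.get("QWK_STORAGE_TYPE") not in VALID_STORAGE_TYPES:
        problems.append("QWK_STORAGE_TYPE must be block, file, or aws_s3")
    # Any key that extends the prefix counts as a per-node entry; the suffix is treated as opaque.
    if not any(k.startswith(OBJECT_PREFIX) and len(k) > len(OBJECT_PREFIX) for k in settings):
        problems.append("no QWK_STORAGE_OBJECT_ entries found")
    return problems


# Hypothetical sample: the suffixes and paths below are made up for illustration.
SAMPLE = """
QUORUM_MODE=storage
WITNESS_MODE=storage
QWK_STORAGE_TYPE=file
QWK_STORAGE_OBJECT_nodeA=/mnt/qwk/nodeA
QWK_STORAGE_OBJECT_nodeB=/mnt/qwk/nodeB
"""

if __name__ == "__main__":
    print(check_storage_settings(parse_defaults(SAMPLE)))
```

The check only confirms that at least one per-node entry exists. Verifying that every cluster node has an entry would require the node list, which the sketch does not have.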

How to use Storage Mode

Initialization is required in order to use the storage quorum mode. The initialization steps for all the nodes in the cluster are as follows.

- Set up all the nodes and make sure that they can communicate with each other.

- On all the nodes run the SPS for Linux setup and enable “Use Quorum/Witness Functions” to install the Quorum/Witness package.

- Create communication paths between all the nodes.

- Configure the quorum setting in the /etc/default/LifeKeeper configuration file on all nodes.

- Run the qwk_storage_init command on all nodes. This command will wait until the initialization of the QWK objects on all nodes is complete. Quorum/Witness functions will become available in the storage mode once the init completes on all nodes.

Reinitialization is necessary when cluster nodes are added or deleted after the initial configuration, or when quorum parameters are changed in the /etc/default/LifeKeeper configuration file. Reinitialize according to the following steps.

- Execute the qwk_storage_exit command on all nodes.

- Delete communication paths between the node that is being deleted and all the other nodes.

- Create communication paths between the node that is being added and all the other nodes.

- Modify the quorum parameters in the /etc/default/LifeKeeper configuration file on all nodes.

- Execute the qwk_storage_init command on all nodes.
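
The ordering of the reinitialization steps can be sketched as a function that turns a membership change into an ordered checklist. This is only an illustration: `reinit_plan` is a hypothetical helper, it runs nothing, and it assumes that "all nodes" means the current members for qwk_storage_exit and the resulting members for the configuration edit and qwk_storage_init.

```python
from typing import List, Set, Tuple


def reinit_plan(current: Set[str], target: Set[str]) -> List[Tuple[str, str]]:
    """Return (action, node) pairs in the order the reinitialization steps list them."""
    removed = sorted(current - target)   # nodes leaving the cluster
    added = sorted(target - current)     # nodes joining the cluster
    plan: List[Tuple[str, str]] = []
    plan += [("qwk_storage_exit", n) for n in sorted(current)]
    plan += [("delete communication paths", n) for n in removed]
    plan += [("create communication paths", n) for n in added]
    plan += [("edit /etc/default/LifeKeeper", n) for n in sorted(target)]
    plan += [("qwk_storage_init", n) for n in sorted(target)]
    return plan
```

For example, `reinit_plan({"a", "b"}, {"a", "b", "c"})` lists qwk_storage_exit for the two existing nodes first and ends with qwk_storage_init on all three.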

Troubleshooting

The frequent logging of message ID 135802 in the lifekeeper.log indicates that the periodic reading and writing of the QWK objects is overloaded and causing a delay in processing. If the number of nodes in the cluster is large and S3 is used for the shared storage (especially when used in two regions), the load from reading and writing of the QWK objects can be high.

Follow these steps to avoid the frequent logging of message ID 135802 in the lifekeeper.log.

- Increase the value of QWK_STORAGE_HBEATTIME (the time required for a failure to be detected and for a failover to begin will increase)

- If the shared storage choice is S3, use it only in the regions with the lowest network latency.

- Increase the throughput (for Amazon EC2 instances, change the instance type, etc.)
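
To judge how frequent the message is before and after tuning, the occurrences can be counted. The sketch below is a plain substring match and makes no assumption about the log line format; the function name `count_message` is hypothetical, and the location of lifekeeper.log on a given system must be supplied by the caller.

```python
from typing import Iterable

MESSAGE_ID = "135802"


def count_message(lines: Iterable[str], message_id: str = MESSAGE_ID) -> int:
    """Count log lines that mention the given message ID."""
    return sum(1 for line in lines if message_id in line)


def count_in_file(log_path: str, message_id: str = MESSAGE_ID) -> int:
    """Count matching lines in a log file; log_path is supplied by the caller."""
    with open(log_path, "r", errors="replace") as handle:
        return count_message(handle, message_id)
```

Because this matches any line containing the digits, unrelated numbers that happen to include 135802 would also be counted, so treat the result as an approximation.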

Expected Behaviors for Storage Mode (Assuming Default Modes)

The behavior of a two-node cluster, with Node A (resources are in service) and Node B (resources are on standby), is described below.

The following three events may change the resource status on a node failure:

- COMM_DOWN event

An event called when all the communication paths between the nodes are disconnected.

- COMM_UP event

An event called when the communication paths are recovered from a COMM_DOWN state.

- LCM_AVAIL event

An event called after LCM initialization is completed; it is called only once, when LifeKeeper starts. Once this state has been reached, heartbeat transmission to other nodes in the cluster begins over the established communication paths, and the node is ready to receive heartbeat requests from other nodes in the cluster. LCM_AVAIL is always processed before a COMM_UP event.

Scenario 1

The communication paths fail between Node A and Node B (Both Node A and Node B can access the shared storage)

In this case, the following will happen:

- Both Node A and Node B will begin processing a COMM_DOWN event, though not necessarily at exactly the same time.

- Both nodes will perform the quorum check and determine that they still have quorum (both A and B can access the shared storage).

- Each node will check the QWK object for the node with which it has lost communication to see if it is still being updated on a regular basis. Both nodes will find that the other’s QWK object is being updated regularly, as both nodes are still running witness checks.

- It will be determined, via the witness checking on each node, that the other is still alive so no failover processing will take place. Resources will be left in service at Node A.

Scenario 2

Node A fails and stops

In this case, Node B will do the following:

- Begin processing a COMM_DOWN event from Node A.

- Determine that it can still access the shared storage and thus has quorum.

- Check to see that updates to the QWK object for Node A have stopped (witness checking).

- Verify via witness checking that Node A really appears to be lost and begin the usual failover activity. Node B will continue processing and bring the protected resources in service.

With resources being in-service on Node B, Node A is powered on and establishes communications with the other nodes and is able to access the QWK shared storage

In this case, Node A will process an LCM_AVAIL event. Node A will determine that it has quorum and will not bring resources in service because they are currently in service on Node B. Next, a COMM_UP event will be processed between Node A and Node B.

Each node will determine that it has quorum during the COMM_UP events and Node A will not bring resources in service because they are currently in service on Node B.

With resources being in-service on Node B, Node A is powered on and cannot establish communications to the other nodes but is able to access the QWK shared storage

In this case, Node A will process an LCM_AVAIL event. Node A will determine that it has quorum since it can access the shared storage for the QWK objects. It will then perform witness checks to determine the status of Node B, since communication with Node B is down. Because Node B is running and has been updating its QWK object, Node A detects this and does not bring resources in service. Node B will do nothing since it cannot communicate with Node A and already has the resources in service.

Scenario 3

A failure occurs with the network for Node A (Node A is running without communication paths to the other nodes and does not have access to the QWK objects on shared storage)

In this case, Node A will do the following:

- Begin processing a COMM_DOWN event from Node B.

- Determine that it cannot access the shared storage and thus does not have quorum.

- Immediately force-quit (“fastkill”, default behavior of QUORUM_LOSS_ACTION).

Also, in this case, Node B will do the following:

- Begin processing a COMM_DOWN event from Node A.

- Determine that it can still access the shared storage and thus has quorum.

- Verify that the updating for the QWK objects for Node A has stopped (witness checking).

- Verify via witness checking that Node A really appears to be lost and begin the usual failover activity. Node B will now have the protected resources in service.
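
The COMM_DOWN handling in Scenarios 1 through 3 follows the same two-stage decision: a quorum check, then a witness check. The sketch below summarizes that decision for a two-node cluster with default settings. The names `Action` and `on_comm_down` are hypothetical and this is not LifeKeeper code.

```python
from enum import Enum


class Action(Enum):
    QUORUM_LOSS = "no quorum: apply QUORUM_LOSS_ACTION (fastkill by default)"
    NO_FAILOVER = "peer still updating its QWK object: no failover"
    FAILOVER = "peer's QWK object updates stopped: begin failover"


def on_comm_down(can_access_storage: bool, peer_object_updating: bool) -> Action:
    """Decide what a node does when all communication paths to its peer are down."""
    if not can_access_storage:
        return Action.QUORUM_LOSS       # quorum check failed (Scenario 3, Node A)
    if peer_object_updating:
        return Action.NO_FAILOVER       # witness check sees the peer alive (Scenario 1)
    return Action.FAILOVER              # witness check sees the peer lost (Scenarios 2 and 3, Node B)
```

The quorum check comes first, so a node that cannot reach the shared storage never gets as far as evaluating its peer's state.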

With resources being in-service on Node B, Node A is powered on and establishes communications with the other nodes and is able to access the QWK shared storage

Same as scenario 2.

With resources being in-service on Node B, Node A is powered on but is not able to access the QWK shared storage

In this case, Node A will process an LCM_AVAIL event. Node A will determine that it does not have quorum and will not bring resources in service.

If the communication paths to Node B are available, then a COMM_UP event will be processed. However, because Node A does not have quorum, it will not bring resources in service.
