SIOS DataKeeper employs both asynchronous and synchronous mirroring schemes. Understanding the advantages and disadvantages of each scheme is essential to operating SIOS DataKeeper correctly.

Synchronous Mirroring

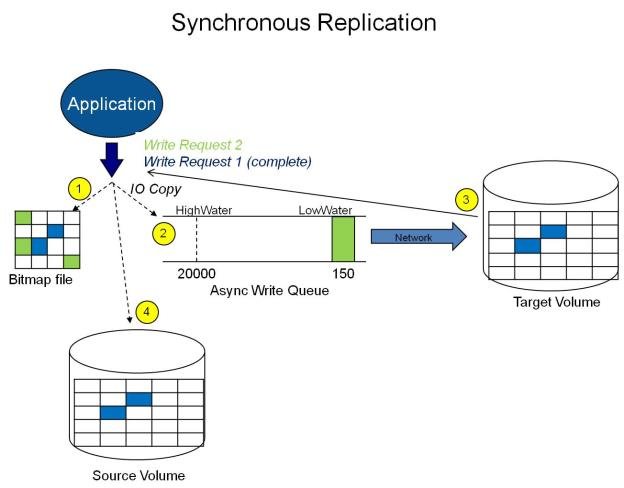

With synchronous mirroring, each write is intercepted and transmitted to the target system to be written on the target volume at the same time that the write is committed to the underlying storage device on the source system. Once both the local and target writes are complete, the write request is acknowledged as complete and control is returned to the application that initiated the write. The persistent bitmap file on the source system is updated.

The following sequence of events describes what happens when a write request is made to the source volume of a synchronous mirror.

- The following occur in parallel.

a. A copy of the write is put on the mirror Write Queue.

b. The write is sent to the local volume for completion.

- The write returns a completion status to the caller after both operations above complete.

a. If any condition prevents the write from completing on the Target (HighWater or QueueByteLimit reached, network transmission error, or write error on the target system), the mirror state is changed to Paused. However, the status of the volume write which is returned to the caller is not affected.

b. The status of the local volume write is returned to the caller.
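The sequence above can be sketched as a small simulation. This is a toy model for illustration only, not DataKeeper's implementation: the class `SyncMirror`, its methods, and the `target_ok` switch are hypothetical names, and the single `_write_target` call stands in for queueing the write, transmitting it, and waiting for the target's acknowledgment.

```python
from concurrent.futures import ThreadPoolExecutor
from enum import Enum


class MirrorState(Enum):
    MIRRORING = "Mirroring"
    PAUSED = "Paused"


class SyncMirror:
    """Toy model of a synchronous mirror (hypothetical, for illustration)."""

    def __init__(self, target_ok=True):
        self.state = MirrorState.MIRRORING
        self.source = {}  # block number -> data on the source volume
        self.target = {}  # block number -> data on the target volume
        self.target_ok = target_ok  # assumption: switch to simulate a target failure

    def _write_local(self, block, data):
        self.source[block] = data
        return True  # status of the local volume write

    def _write_target(self, block, data):
        # Stands in for the Write Queue, the network send, and the target ack.
        if not self.target_ok:
            return False
        self.target[block] = data
        return True

    def write(self, block, data):
        if self.state is not MirrorState.MIRRORING:
            # Mirror is paused: only the local write happens.
            return self._write_local(block, data)
        # Start the local write and the mirrored write in parallel.
        with ThreadPoolExecutor(max_workers=2) as pool:
            local = pool.submit(self._write_local, block, data)
            remote = pool.submit(self._write_target, block, data)
            local_status = local.result()
            if not remote.result():
                # A target-side failure pauses the mirror...
                self.state = MirrorState.PAUSED
        # ...but the caller only ever sees the local write status.
        return local_status
```

Note that `write` returns only after both operations have finished, which is why the application's write latency includes the round trip to the target.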

In this diagram, Write Request 1 has already completed. Both the target and the source volumes have been updated.

Write Request 2 has been sent from the application and the write is about to be written to the target volume. Once written to the target volume, DataKeeper will send an acknowledgment that the write was successful on the target volume, and in parallel, the write is committed to the source volume.

At this point, the write request is complete and control is returned to the application that initiated the write.

While synchronous mirroring ensures that there will be no data loss in the event of a source system failure, it can have a significant impact on the application’s performance, especially in WAN or slow network configurations, because the application must wait for the write to occur both on the source and, across the network, on the target.

Asynchronous Mirroring

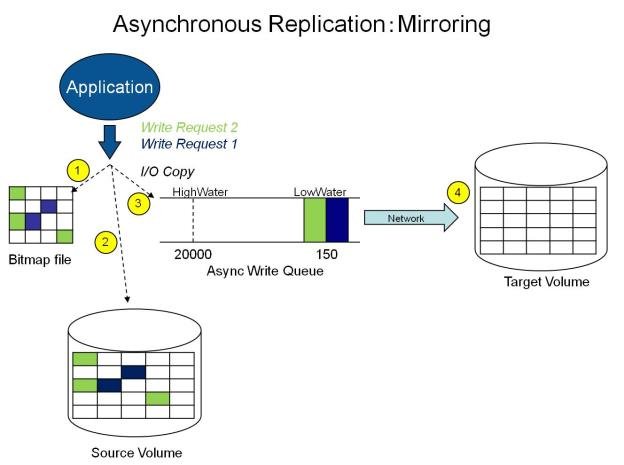

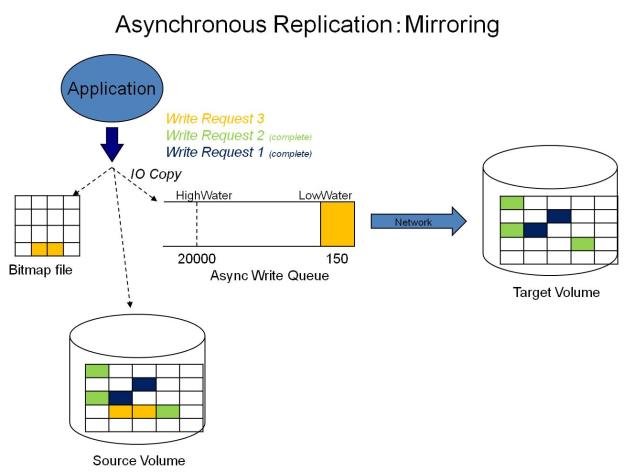

In most cases, SIOS recommends using asynchronous mirroring. With asynchronous mirroring, each write is intercepted and a copy of the data is made. That copy is queued to be transmitted to the target system as soon as the network will allow it. Meanwhile, the original write request is committed to the underlying storage device and control is immediately returned to the application that initiated the write.

To maintain data consistency across multiple volumes (such as database Log and Data files), some applications send Flush requests to the volume. DataKeeper honors Flush requests on a volume with a mirror in the Mirroring state by waiting for all writes in the queue to be sent to the target system and acknowledged. To prevent performance from being impacted in such cases, the registry entry “DontFlushAsyncQueue” may be set, or you may consider locating all files on the same volume.

At any given time, there may be write transactions waiting in the queue to be sent to the target machine. It is important to understand, however, that these writes reach the target volume in time order, so the data on the target volume is always a valid snapshot of the source volume at some point in time. Should the source system fail, it is possible that the target system did not receive all of the writes that were queued up, but the data that has made it to the target volume is valid and usable.

The following sequence of events describes what happens when a write request is made to the source volume of an asynchronous mirror.

- Persistent bitmap file on the source system is updated.

- Source system adds a copy of the write to the mirror Write Queue.

- Source system executes the write request to its source volume and returns to the caller.

- Writes that are in the queue are sent to the target system. The target system executes the write request on its target volume and then sends the status of the write back to the source system.

- Should an error occur during network transmission or while the target system executes its target volume write, the write process on the target system is terminated. The state of the mirror then changes from Mirroring to Paused.
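The steps above can be sketched as a minimal simulation. Everything here is an illustrative assumption rather than DataKeeper's actual code: the class `AsyncMirror`, the `drain` method, and the use of a Python set in place of the persistent bitmap are hypothetical. In particular, clearing a bit once the target acknowledges the write, and discarding the queue when the mirror pauses, are modeling choices that the text does not spell out.

```python
from collections import deque


class AsyncMirror:
    """Toy model of an asynchronous mirror (hypothetical, for illustration)."""

    def __init__(self):
        self.state = "Mirroring"
        self.dirty = set()    # stands in for the persistent bitmap
        self.queue = deque()  # mirror Write Queue, first in, first out
        self.source = {}      # block number -> data on the source volume
        self.target = {}      # block number -> data on the target volume

    def write(self, block, data):
        self.dirty.add(block)                 # 1. update the bitmap
        if self.state == "Mirroring":
            self.queue.append((block, data))  # 2. queue a copy of the write
        self.source[block] = data             # 3. write to the source volume
        return True                           # return to the caller immediately

    def _target_write(self, block, data):
        self.target[block] = data
        return True

    def drain(self, target_write=None):
        """Send queued writes in time order; pause on the first failure."""
        target_write = target_write or self._target_write
        while self.queue and self.state == "Mirroring":
            block, data = self.queue[0]
            if target_write(block, data):
                self.queue.popleft()
                # Assumption: clear the bit once no queued write still touches it.
                if all(b != block for b, _ in self.queue):
                    self.dirty.discard(block)
            else:
                # Transmission or target write error: Mirroring -> Paused.
                self.state = "Paused"
                self.queue.clear()  # dirty bits remain for a later resync
```

Because `write` returns before `drain` runs, the caller never waits on the network; because `drain` always takes the oldest entry first, the target only ever holds a time-ordered prefix of the source's writes.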

In the diagram above, the two write requests have been written to the source volume and are in the queue to be sent to the target system. However, control has already returned to the application that initiated the writes.

In the diagram below, the third write request has been initiated while the first two writes have successfully been written to both the source and target volumes. While in the mirroring state, write requests are sent to the target volume in time order. Thus, the target volume is always an exact replica of the source volume at some point in time.

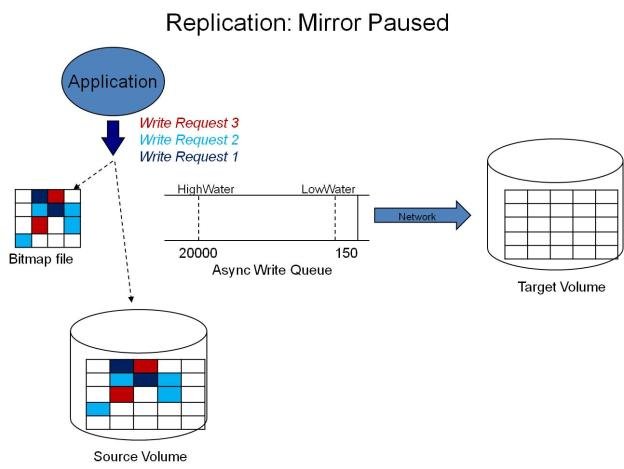

Mirror PAUSED

In the event of an interruption to the normal mirroring process as described above, the mirror changes from the MIRRORING state to a PAUSED state. All changes to the source volume are tracked in the persistent bitmap file only and nothing is sent to the target system.

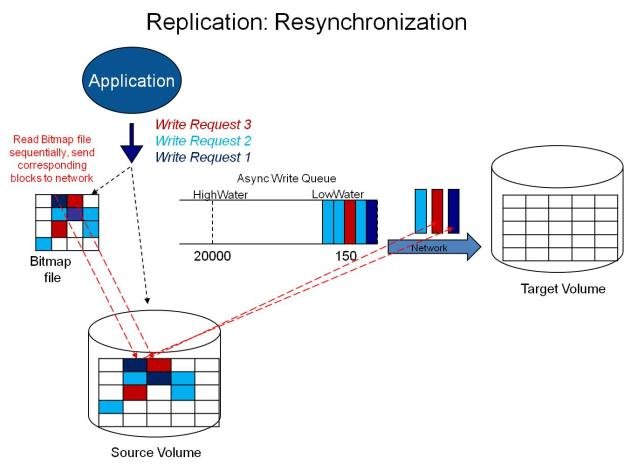

Mirror RESYNCING

When the interruption to either an asynchronous or a synchronous mirror is resolved, the source and target volumes must be resynchronized, and the mirror enters the RESYNC state.

DataKeeper reads sequentially through the persistent bitmap file to determine what blocks have changed on the source volume while the mirror was PAUSED and then resynchronizes only those blocks to the target volume. This procedure is known as a partial resync of the data.

The user may notice a Resync Pending state in the GUI, which is a transitory state and will change to the Resync state.

During resynchronization, all writes are treated as Asynchronous, even if the mirror is a Synchronous mirror. The appropriate bits in the bitmap are marked dirty and are later sent to the target during the process of partial resync as described above.
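As a rough illustration of a partial resync, the sketch below copies only the blocks flagged dirty and lets a write that arrives mid-resync mark its block dirty so that it is picked up before the resync ends. The function name `partial_resync`, the `incoming_writes` parameter, and the use of a set in place of the bitmap are hypothetical; the real bitmap granularity and scan order are not described here.

```python
def partial_resync(source, target, dirty, incoming_writes=()):
    """Copy dirty blocks to the target, lowest-numbered dirty block first.

    source, target: dicts mapping block number -> data
    dirty: set of block numbers, standing in for the persistent bitmap
    incoming_writes: (block, data) pairs that arrive while resync is running
    Returns the list of block numbers copied, in the order they were copied.
    """
    incoming = list(incoming_writes)
    copied = []
    while dirty:
        block = min(dirty)
        dirty.discard(block)
        target[block] = source[block]  # resynchronize only this changed block
        copied.append(block)
        if incoming:
            # A write during resync goes to the source volume and marks its
            # bit dirty; the block is sent later in the same resync.
            new_block, data = incoming.pop(0)
            source[new_block] = data
            dirty.add(new_block)
    return copied


source = {0: "a", 1: "b", 2: "c", 3: "d"}
target = {0: "a", 1: "old", 2: "c", 3: "old"}
copied = partial_resync(source, target, {1, 3}, incoming_writes=[(0, "z")])
```

In this example block 2 is never copied because its bit was never set, which is the point of a partial resync: the work is proportional to what changed, not to the size of the volume.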
